The Engineer Who Tuned a Car for a Test

The engine management software of a 2.0-litre TDI diesel engine in a 2012 Volkswagen Jetta had a feature. The feature was not in the owner's manual. It was not described in any regulatory filing, any NHTSA certification document, or any EPA compliance submission. It existed in a firmware module embedded in the Engine Control Module (ECM), and it did one thing: it detected, based on steering angle, accelerator pedal behaviour, ambient pressure, and vehicle speed profiles, whether the vehicle was being driven in the specific conditions that characterised a US EPA emissions certification test. When those conditions were detected, the engine management system activated a calibration mode that substantially reduced NOx emissions by increasing exhaust gas recirculation and running the NOx aftertreatment system at high efficiency, at the cost of slightly increased fuel consumption and reduced power output.

In normal driving — on roads, in cities, under varying throttle and speed conditions — the ECM deactivated that calibration mode and operated the engine in a mode optimised for performance and fuel economy, in which NOx emissions were approximately 10 to 40 times higher than the certified test result. The engine was not, in any functional sense, the engine that had been certified. It was certified as one device and used as another. The feature is now known generically as a defeat device.

The CARB and EPA announcement of the defeat device in September 2015 initiated what became the largest automotive regulatory scandal in modern history. Volkswagen ultimately paid approximately $33 billion in fines, settlements, and remediation costs across US, European, Canadian, and other jurisdictions. Eleven executives faced criminal charges. The company's US market share declined significantly for multiple years. The scandal is routinely cited as an example of corporate misconduct, of regulatory failure, and of the gap between automotive industry intentions and reality.

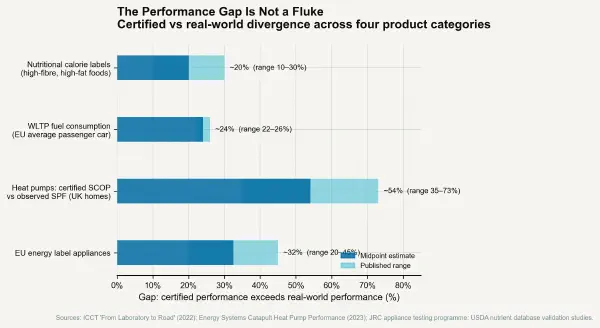

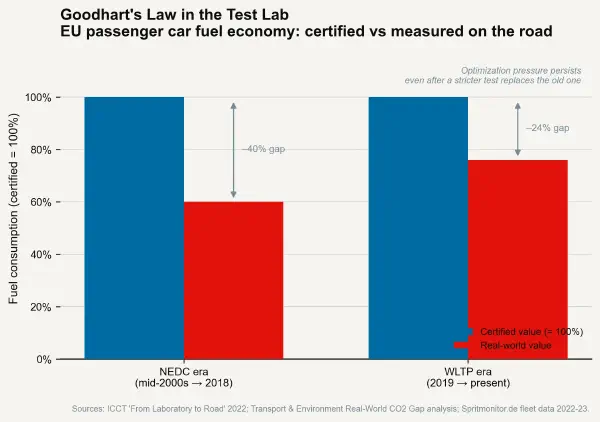

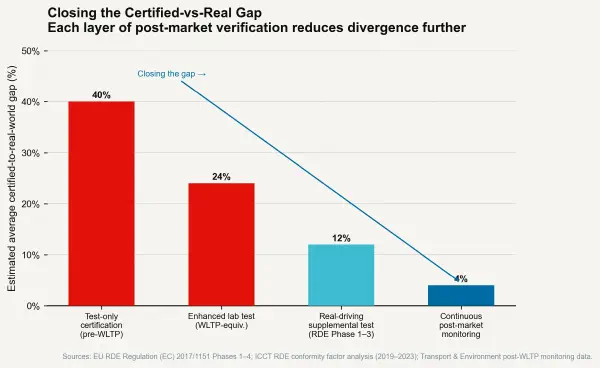

It is all of those things. It is also the logical endpoint of a specific incentive structure — the certification-by-proxy system — which, absent the explicit deception of the defeat device, produces the same directional outcome at lower severity across every regulated product category where compliance with a test is cheaper than compliance with the underlying objective.

The MPDI Framework

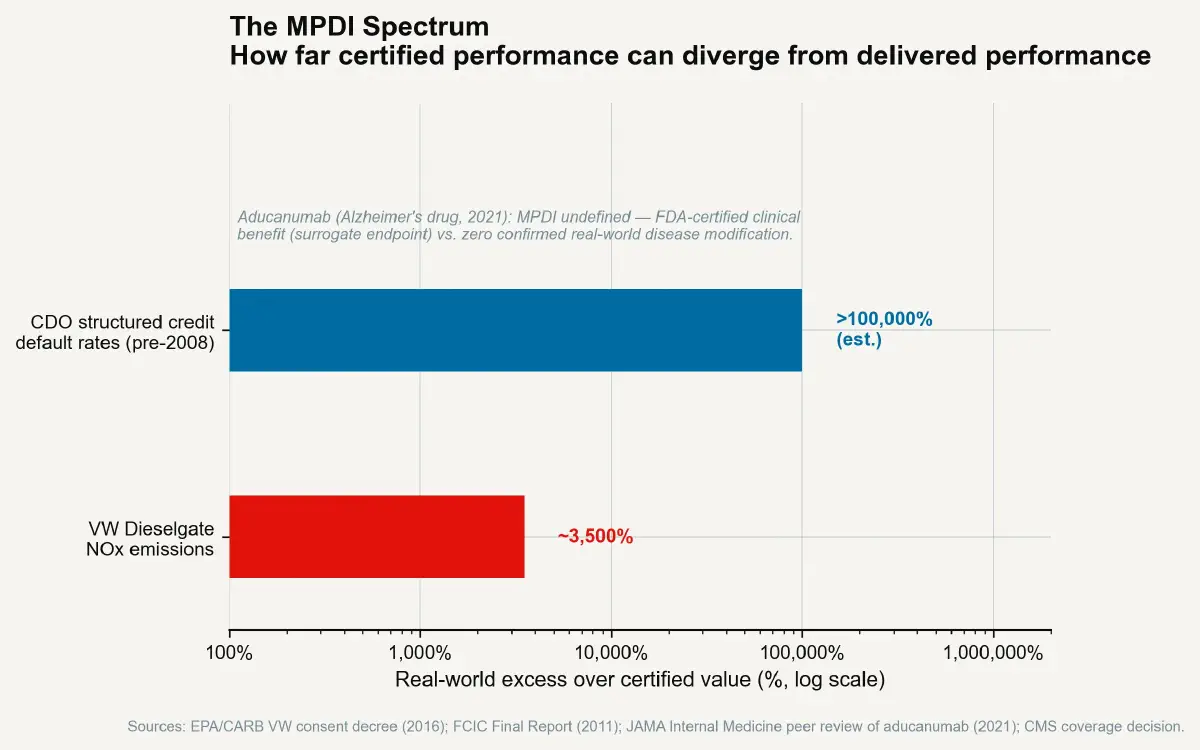

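The Measurement-Performance Divergence Index (MPDI) is defined as: (Certified performance ÷ Real-world performance − 1) × 100, where performance is expressed so that a higher value is better. For a lower-is-better quantity such as emissions or default probability, the ratio is inverted (real-world value ÷ certified value) so that a positive index always means the test flatters the product. An MPDI of zero means the test accurately predicts real-world outcomes. An MPDI of 50% means the product performs 50% better on the test than in use, so customers and regulators receive information that is 50% optimistic about the product's actual performance. An MPDI of 3,500% means the product performs 3,500% better on the test than in use, which for NOx corresponds to real-world emissions about 36 times the certified level. That is approximately the NOx MPDI for the VW 2.0-litre TDI defeat device in certain test configurations.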
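The definition can be sketched in a few lines of Python. This is a minimal illustration rather than a published methodology: the function name `mpdi`, the `lower_is_better` flag, and the example inputs are assumptions introduced here. The flag inverts the ratio for quantities such as emissions, where a smaller number is better, so that a positive index always means the certified figure is optimistic.

```python
import math


def mpdi(certified, real_world, lower_is_better=False):
    """Measurement-Performance Divergence Index, in percent.

    Higher-is-better metrics: (certified / real_world - 1) * 100.
    Lower-is-better metrics (assumed convention): the ratio is inverted,
    giving (real_world / certified - 1) * 100.
    """
    if lower_is_better:
        numerator, denominator = real_world, certified
    else:
        numerator, denominator = certified, real_world
    if denominator == 0:
        # This sketch treats any zero denominator as unbounded divergence.
        return math.inf
    return (numerator / denominator - 1) * 100


# Illustrative inputs only (not measured data).
print(mpdi(150, 100))                         # 50.0
print(mpdi(1.0, 36.0, lower_is_better=True))  # 3500.0
print(mpdi(1.0, 0.0))                         # inf
```

The second call uses normalised NOx values: a certified level of 1 and a real-world level of 36 reproduce the 3,500% figure, and the third shows why a real-world benefit of zero makes the index unbounded.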

Expressing the VW scandal as an MPDI rather than simply as a fraud case illuminates its structure. The MPDI was not created in a single decision by a single executive. It was built incrementally over years, through a calibration process that involved dozens or hundreds of engineers, multiple management reviews of the test results versus real-world results, and a series of decision points at which the gap between the two could have been disclosed, corrected, or escalated. The defeat device was the mechanism that sustained an intolerable MPDI after the engineering team had concluded that the air quality targets and the performance targets were incompatible on a single engine calibration. The MPDI was the problem; the defeat device was the engineering solution to the problem of concealing it.

The MPDI Across Sectors

Financial Credit Ratings at Pre-Crisis Levels

The 2008 financial crisis provides the second most dramatic MPDI case study in recent history. Structured credit products — particularly collateralised debt obligations (CDOs) composed of mortgage-backed securities — were rated by the major credit rating agencies (Moody's, S&P, Fitch) using models that assigned AAA ratings to senior tranches of instruments that were composed principally of subprime residential mortgage assets.

The MPDI for these instruments was extreme. Because default probability is a lower-is-better quantity, it is measured here as (actual ex-post default probability ÷ certified default probability − 1) × 100, so that a positive value means the rating was optimistic. AAA-rated CDO tranches were certified as having default probabilities below 0.01% over a 10-year horizon (the AAA standard). In the 2007–2009 period, many of these instruments experienced 30–50% losses of principal value, which implies realised default rates orders of magnitude above the certified level. The MPDI for structured credit ratings, measured against eventual losses, exceeded 1,000% for many specific instruments.
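To get a feel for the scale involved, the arithmetic can be run directly. The sketch below is illustrative only: it treats the 30–50% principal losses quoted above as if they were realised default rates, which is a simplifying assumption, and the helper name `rating_mpdi_percent` is hypothetical. Values are in basis points (1 bp = 0.01%), and the ratio is taken as realised ÷ certified so that a positive result means the rating was optimistic.

```python
def rating_mpdi_percent(certified_bp, realised_bp):
    """MPDI for a lower-is-better quantity: (realised / certified - 1) * 100."""
    if certified_bp <= 0:
        raise ValueError("certified_bp must be positive")
    return (realised_bp / certified_bp - 1) * 100


CERTIFIED_BP = 1  # the 0.01% AAA ceiling quoted in the text

# 3000 bp = 30% and 5000 bp = 50%, standing in for realised default rates.
for realised_bp in (3000, 5000):
    print(realised_bp, rating_mpdi_percent(CERTIFIED_BP, realised_bp))
```

Under these assumptions the index lands in the hundreds of thousands of percent. A realised rate of only 0.11% (11 bp) would already be enough to reach the 1,000% mark.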

The mechanism was not a defeat device in the automotive sense — there was no intentional programming to distinguish rating-assessment conditions from real-world performance conditions. The MPDI arose from model assumptions: specifically, the correlation assumptions embedded in the Gaussian copula model used to assess the joint default probability of the mortgage pools underlying the CDOs. The model assumed relatively low default correlation between subprime mortgages from different geographic regions, because historical data on co-default was limited and the model was calibrated against a period without a nationwide housing price decline. When nationwide housing prices declined simultaneously across the US in 2007–2008, the correlation assumption was invalidated, and the certified performance of the instruments diverged catastrophically from actual performance.
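The sensitivity to the correlation assumption can be illustrated with a textbook simplification. The sketch below uses the large-homogeneous-pool (Vasicek) form of the one-factor Gaussian copula, which is a stand-in chosen here and not the agencies' actual models. The function name `breach_probability` and every parameter value (a 10% average default probability, a 30% breach threshold, correlations of 0.05 and 0.50) are assumptions for illustration. Recoveries are ignored, so the loss fraction equals the default fraction.

```python
from math import sqrt
from statistics import NormalDist

_STD_NORMAL = NormalDist()  # standard normal: mean 0, sigma 1


def breach_probability(default_prob, threshold, rho):
    """P(pool default fraction > threshold) for a large homogeneous pool.

    One-factor Gaussian copula: each loan defaults when
    sqrt(rho) * M + sqrt(1 - rho) * Z_i falls below inv_cdf(default_prob),
    where M is a shared factor and Z_i is loan-specific noise.
    """
    if not 0.0 < rho < 1.0:
        raise ValueError("rho must lie strictly between 0 and 1")
    k = _STD_NORMAL.inv_cdf(default_prob)
    x = _STD_NORMAL.inv_cdf(threshold)
    return _STD_NORMAL.cdf((k - sqrt(1.0 - rho) * x) / sqrt(rho))


# Same pool, same average default probability, different correlation.
low_corr = breach_probability(default_prob=0.10, threshold=0.30, rho=0.05)
high_corr = breach_probability(default_prob=0.10, threshold=0.30, rho=0.50)
print(f"rho=0.05: {low_corr:.4%}")
print(f"rho=0.50: {high_corr:.4%}")
```

With these illustrative inputs, the chance that more than 30% of the pool defaults moves from a few hundredths of a percent to roughly ten percent when only the correlation changes. The average default probability is identical in both runs, which is why a model calibrated on a period of low co-default could certify senior tranches as nearly riskless.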

The rating agency model was not secretly optimised to produce favourable ratings, in the way the Bosch engine management software was optimised to perform in test conditions. The rating agency model was simply wrong in ways that the rating agencies could not or would not acknowledge while the CDO issuance fees were substantial. The MPDI emerged from motivated reasoning and commercial incentive rather than explicit fraud. The result was approximately the same.

Pharmaceutical Clinical Endpoints and Surrogate Measures

Drug approvals in the United States and European Union rely on clinical trial endpoints, defined as measurable outcomes attainable within a trial period. For diseases with long natural histories (Alzheimer's disease, certain cardiovascular conditions, many cancers), the ultimate clinical endpoint (patient mortality, functional independence, disease progression to late-stage symptoms) may require follow-up periods of 10–20 years that are not practical for trial timelines or commercial drug development economics. Regulatory agencies therefore accept surrogate endpoints: measurable short-term biomarkers that are assumed to correlate with the ultimate clinical outcomes.

The MPDI for surrogate endpoint drug approvals is a live and contested research question. A systematic review published in JAMA Internal Medicine (2021) examined 38 drugs approved by the FDA under accelerated approval pathways (which require only surrogate endpoint demonstration) and found that confirmatory studies — required by the accelerated approval process to subsequently establish efficacy on clinical outcomes — remained incomplete for 62% of drugs more than three years after accelerated approval. Of the studies that had been completed, a substantial fraction showed that the surrogate endpoint improvement did not translate into clinically meaningful outcome improvements.

The aducanumab approval for Alzheimer's disease (Biogen, 2021) was the most high-profile case: the FDA approved the drug based on amyloid plaque reduction (a surrogate endpoint) over the objection of its own external advisory committee, which voted 10-0 against approval, citing insufficient evidence that amyloid reduction translated into functional cognitive improvement. When the drug reached hospitals, many neurologists declined to prescribe it, and it was ultimately withdrawn from commercial marketing in 2024. The MPDI for certified clinical benefit versus real-world clinical benefit in this case was effectively infinite: the certified benefit was positive, while the real-world clinical benefit was disputed and arguably zero.

The Structural Generator of MPDI

These three cases — VW emissions, structured finance ratings, pharmaceutical surrogate endpoints — differ in domain, regulatory structure, geographical context, and specific mechanism. They share a structural generator: in each case, certification compliance was cheaper than actual performance compliance, and the entity seeking certification had the capability to optimise for the former rather than the latter. The VW engineering team could compute the calibration that minimised test-cycle NOx. The CDO structurers could select the model assumptions that produced the preferred ratings. The drug sponsor could select the surrogate endpoint whose improvement was achievable within the trial window.

In none of these cases was the regulator passively unaware of the gap between certified and real-world performance: all three regulatory systems had known, documented, and discussed the limitations of their measurement approaches. The EPA had noted, in internal documents, concerns about the correlation between laboratory test-cycle results and real-world driving emissions. The credit rating agencies had received academic papers questioning Gaussian copula correlation assumptions in CDO models. The FDA had explicitly noted the limitations of amyloid plaque as an Alzheimer's surrogate endpoint. The regulators were not deceived about the limits of their tests. They were structurally constrained by their measurement apparatus and commercially or institutionally incentivised to continue using it.

The MPDI is not primarily a story about bad actors. It is a story about systems that consistently reward proxy compliance over real-world performance — and the next post examines the architecture of those systems in detail.