The Two-Man Rule and What It Actually Costs

The Two-Man Rule (the "two-person concept" in US Department of Defense nuclear surety policy) requires that any action affecting a nuclear weapon, from arming to release, be performed by two authorised individuals who are present at the same time and in visual contact with each other. Neither individual may perform any step alone. The procedure is intentionally inefficient: from a purely operational standpoint, a single trained operator could perform every required step competently. The second person is not a technical necessity. The requirement is a deliberate insertion of friction.

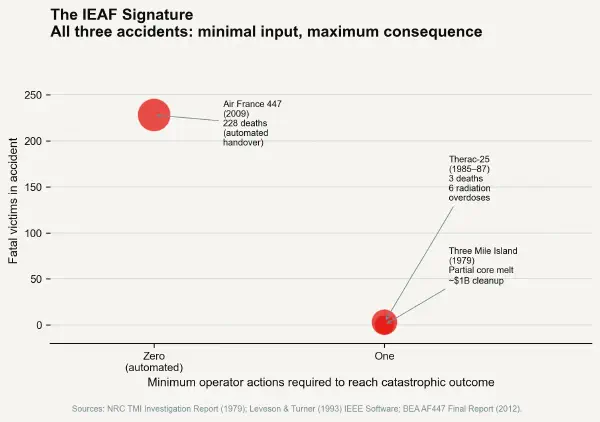

The Two-Man Rule is among the purest examples of protective friction as intentional interface design. The system is not difficult because it is badly designed; it is difficult because the difficulty is load-bearing. Every additional step required to arm or launch a nuclear weapon is a barrier against unauthorised use, impulsive action, and single-point human failure. A launch system built around the Two-Man Rule therefore has, by design, an exceptionally low IEAF: the minimum action complexity required to reach the maximum consequence is made as large as practical, because the entire purpose of the interface is to prevent rapid, easy access to catastrophic outcomes.
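
The core procedural property of the rule, that no single person can complete the action alone, can be sketched in software as a two-person control. The sketch below is a minimal illustration, not a model of any real weapons procedure: the class name `TwoPersonGate`, the roster of names, and the action string are all hypothetical, and it captures only the "two distinct authorised approvers" requirement, not visual contact or the rest of the protocol.

```python
from dataclasses import dataclass, field


class AuthorisationError(Exception):
    """Raised when an action is attempted without two distinct authorised approvals."""


@dataclass
class TwoPersonGate:
    """Hypothetical two-person control: an action needs two distinct authorised approvers."""
    authorised: frozenset[str]
    approvals: dict[str, set[str]] = field(default_factory=dict)

    def approve(self, action: str, person: str) -> None:
        # Anyone outside the authorised roster is rejected outright.
        if person not in self.authorised:
            raise AuthorisationError(f"{person} is not authorised")
        self.approvals.setdefault(action, set()).add(person)

    def execute(self, action: str) -> str:
        # Approvals are stored in a set, so one person approving twice
        # still counts as a single approver.
        approvers = self.approvals.get(action, set())
        if len(approvers) < 2:
            raise AuthorisationError(
                f"{action!r} needs two distinct approvers, has {len(approvers)}"
            )
        # Clear approvals so every execution requires a fresh pair.
        del self.approvals[action]
        return f"{action} executed by {sorted(approvers)}"


gate = TwoPersonGate(authorised=frozenset({"alice", "bob", "carol"}))
gate.approve("arm", "alice")
gate.approve("arm", "alice")  # a repeat approval by the same person is not a second person
try:
    gate.execute("arm")
except AuthorisationError as err:
    print(err)  # 'arm' needs two distinct approvers, has 1
gate.approve("arm", "bob")
print(gate.execute("arm"))  # arm executed by ['alice', 'bob']
```

The friction sits entirely on the path to execution: approving is cheap for each authorised person, but no single identity, however many times it approves, can reach the `execute` step.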

Protective Friction as a Design Discipline

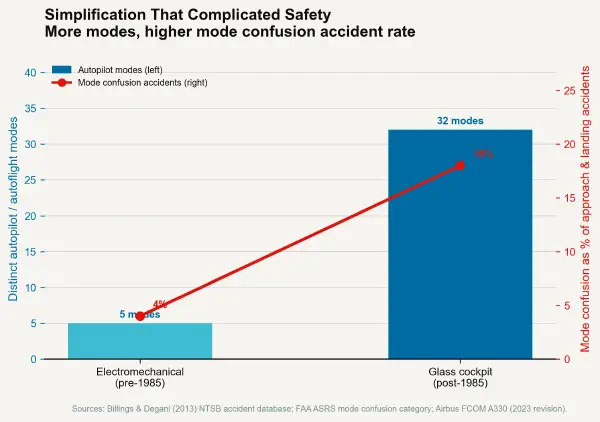

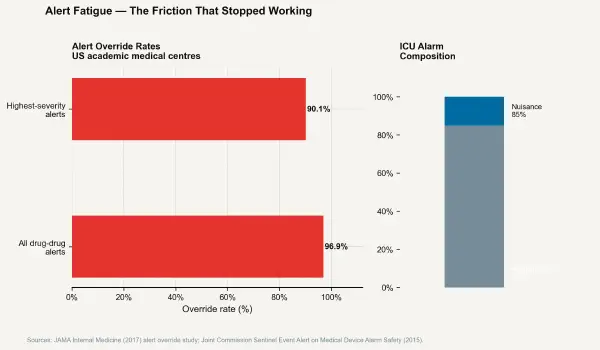

Safety engineering has long recognised protective friction under various names: safety barriers (Reason, 1990), defence-in-depth (in nuclear and aviation regulation), and mandatory action complexity (in the human factors literature). What has not been systematically articulated across domains is how the trend toward simpler interfaces erodes those protections. The IEAF framework makes this relationship measurable: every design change that reduces the minimum action complexity required to reach a high-consequence state increases IEAF, and increasing IEAF is a safety-critical engineering decision whether or not it is recognised as one.

The practical discipline of protective friction starts by distinguishing two categories of complexity in an interface. Incidental complexity (steps required by poor design, unclear labelling, or unintuitive layout) reduces usability without providing protection. Protective complexity (steps required because the consequence of bypassing them is high) is load-bearing. Telling them apart comes down to one question: what is this friction for? If it only slows down a routine operation without a meaningful safety benefit, it is incidental and should be eliminated. If it stands between a common operational state and a high-consequence state, removing it increases IEAF and should require explicit safety justification.

The Domains That Get This Right

Financial Circuit Breakers

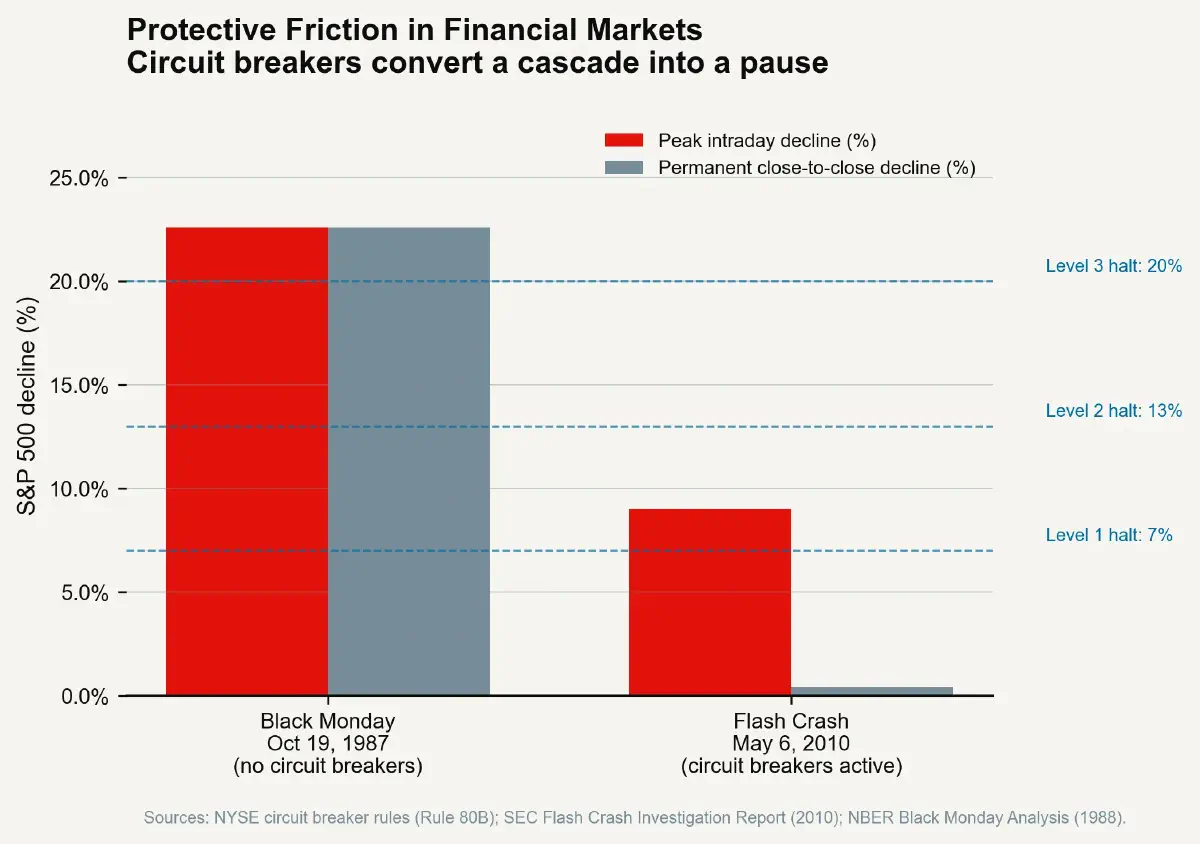

The US equity market circuit breaker system, introduced after the 1987 Black Monday crash and substantially reformed after the 2010 Flash Crash, is one of the clearest examples of protective friction at infrastructure scale. The rules impose mandatory market-wide trading halts when the S&P 500 falls from the previous day's close by 7% (Level 1), 13% (Level 2), or 20% (Level 3). A Level 1 or Level 2 decline halts trading for 15 minutes if it occurs before 3:25 p.m. Eastern Time; a Level 3 decline halts trading for the rest of the day. Once a halt begins, trading cannot resume until the halt period has elapsed. The pause is not a request: no market participant can waive it, and no trades execute on the exchanges while it is in force.
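
The trigger logic is simple enough to sketch directly. The function below maps an S&P 500 reading to a circuit-breaker level using the 7%, 13%, and 20% thresholds measured from the prior day's close, a 15-minute halt for Levels 1 and 2 only before 3:25 p.m. Eastern Time, and a rest-of-day halt for Level 3. The function name `circuit_breaker` is hypothetical, and the sketch deliberately omits details of the exchange rules, such as the limit of one Level 1 and one Level 2 halt per day.

```python
from datetime import time
from typing import Optional

# Market-wide thresholds: decline of the S&P 500 from the prior day's close,
# listed from most to least severe so the highest level reached is reported.
LEVELS = ((3, 0.20), (2, 0.13), (1, 0.07))
# Level 1 and Level 2 declines halt trading only before 3:25 p.m. Eastern Time.
LATE_CUTOFF = time(15, 25)


def circuit_breaker(prior_close: float, current: float, now: time) -> tuple[Optional[int], str]:
    """Return (level, action) for an S&P 500 reading taken at Eastern Time `now`.

    Simplified sketch: it ignores the once-per-day limit on Level 1 and Level 2
    halts and any other exchange-specific detail.
    """
    decline = (prior_close - current) / prior_close
    for level, threshold in LEVELS:
        if decline >= threshold:
            if level == 3:
                return level, "halt for the rest of the trading day"
            if now < LATE_CUTOFF:
                return level, "halt for 15 minutes"
            return level, "no halt (Level 1/2 at or after 3:25 p.m. ET)"
    return None, "no halt"


print(circuit_breaker(5000.0, 4600.0, time(11, 0)))   # (1, 'halt for 15 minutes')
print(circuit_breaker(5000.0, 4300.0, time(15, 40)))  # (2, 'no halt (Level 1/2 at or after 3:25 p.m. ET)')
print(circuit_breaker(5000.0, 3900.0, time(15, 40)))  # (3, 'halt for the rest of the trading day')
```

The escalation is visible in the return values: the two lower levels buy time, while the highest level stops trading outright regardless of the hour.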

From a market microstructure perspective, circuit breakers are pure friction insertions. They interrupt the primary function of the market (continuous price discovery through voluntary trade) to break the cascade dynamics that produced a 22.6% single-day fall in the Dow Jones Industrial Average in 1987 and, in 2010, an intraday drop of roughly 9% in the Dow that largely reversed within about 36 minutes. The circuit breaker does not prevent the underlying market moves that trigger it. It provides a temporal friction barrier, a mandatory cooling-off period, that raises the minimum action complexity for any participant seeking to accelerate a downward cascade. The cascade can still happen, but while a halt is in force, sustained automated selling on the exchanges is impossible.

The IEAF of an equity market without circuit breakers is high: automated trading algorithms can escalate a minor liquidity event into a systemic consequence through a sequence of individually rational, sub-millisecond actions. The circuit breaker mechanism does not make automated trading impossible; it inserts mandatory latency into the path from ordinary market stress to systemic consequence.

Nuclear Launch Protocol Safeguards

The evolution of nuclear command and control from the early Cold War to current protocols shows protective friction being deliberately layered as threat environments became better understood. Early nuclear weapon systems had minimal procedural protections: the ability to arm and employ a weapon depended on physical possession of the weapon and the authorisation of the commander on the spot. The 1960s and 1970s saw the systematic addition of coded switch systems called Permissive Action Links (PALs), which required entry of an authorised code before arming was possible. These were combined with environmental sensing devices, which block arming unless the weapon experiences the physical environment expected of its intended delivery, such as a characteristic acceleration profile.

The structural IEAF logic of these additions is consistent: each layer of technical and procedural protection substantially increases the minimum action complexity of reaching weapon employment without authorisation, while adding little to the complexity of authorised employment by a legitimate user following correct procedures. This asymmetry, lowering IEAF for unauthorised use while keeping the added burden on authorised use small, is the design goal of protective friction architecture, and it is what distinguishes protective complexity from incidental complexity. PAL code entry does add a step for everyone, but for an authorised crew holding the code it is a single routine action, while for anyone without the code it is a barrier that is impractical to overcome.
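
The asymmetry can be made concrete with a toy calculation. The sketch below assumes a hypothetical code-gated lock with a uniformly random numeric code of `digits` digits and an attacker limited to `attempts` tries (for instance by a lockout). Both parameters, the constant `AUTHORISED_ENTRIES`, and the function name are illustrative assumptions, not a description of how any real PAL is built. An authorised holder needs one entry; a guesser trying distinct codes succeeds with probability attempts / 10^digits.

```python
from fractions import Fraction

# Hypothetical parameters for illustration only; not the design of any real PAL.
AUTHORISED_ENTRIES = 1  # an authorised holder of the code enters it once


def unauthorised_success_probability(digits: int, attempts: int) -> Fraction:
    """Chance that someone without the code guesses it within `attempts` tries.

    Assumes a uniformly random numeric code of `digits` digits and a guesser who
    tries distinct codes with no other information, so the chance is
    attempts / 10**digits, capped at 1.
    """
    keyspace = 10 ** digits
    return min(Fraction(attempts, keyspace), Fraction(1))


for digits in (4, 6, 12):
    p = unauthorised_success_probability(digits, attempts=3)
    print(f"{digits}-digit code, 3 tries: authorised path is {AUTHORISED_ENTRIES} entry; "
          f"guesser succeeds with probability {p}")
```

Lengthening the code leaves the authorised path at one entry while shrinking the unauthorised success probability by a factor of ten per digit, which is the asymmetry the paragraph above describes.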

Deliberate Confirmation Architecture in Software

The confirmation dialog ("Are you sure you want to delete this file? This action cannot be undone.") is a ubiquitous consumer-facing example of protective friction, and it is regularly criticised in the UX design literature as unnecessary and patronising to experienced users. In its narrower form the critique is right: confirmation dialogs that fire on low-consequence actions (deleting a temporary file, closing a browser tab) are incidental friction and should be eliminated or at least made suppressible.

The critique becomes dangerous when it is generalised to all confirmation dialogs regardless of consequence. Kubernetes deployment workflows, where a single malformed manifest or mistargeted command can take down production workloads serving millions of users, commonly layer several confirmation steps: dry-run execution (kubectl apply --dry-run=server), difference displays (kubectl diff), and, in many teams' own tooling, explicit typed confirmation of the target cluster name. These steps are not patronising toward experts; they are appropriate IEAF management for an interface where a single command can produce catastrophic, irreversible consequences.
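
A typed-confirmation gate of this kind can be sketched as a small wrapper around kubectl. The function names `preview_commands` and `apply_command` and the overall workflow are hypothetical; the kubectl flags used (`--context`, `diff -f`, `apply --dry-run=server -f`, `apply -f`) are standard. The sketch only builds command lines and does not run them: in real tooling, the preview commands would be executed and their output shown before the operator is asked to retype the context name.

```python
import shlex


class ConfirmationMismatch(Exception):
    """Raised when the typed confirmation does not match the target context."""


def preview_commands(context: str, manifest: str) -> list[list[str]]:
    """Read-only steps shown to the operator before any change is applied."""
    base = ["kubectl", "--context", context]
    return [
        base + ["diff", "-f", manifest],                       # show what would change
        base + ["apply", "--dry-run=server", "-f", manifest],  # server-side validation, nothing persisted
    ]


def apply_command(context: str, manifest: str, typed_confirmation: str) -> list[str]:
    """Return the real apply command only if the operator retyped the context name exactly."""
    # Exact, case-sensitive comparison: a near miss, such as a different cluster, is rejected.
    if typed_confirmation != context:
        raise ConfirmationMismatch(
            f"typed {typed_confirmation!r}, expected {context!r}; refusing to apply"
        )
    return ["kubectl", "--context", context, "apply", "-f", manifest]


for cmd in preview_commands("prod-eu-1", "deploy.yaml"):
    print(shlex.join(cmd))
print(shlex.join(apply_command("prod-eu-1", "deploy.yaml", typed_confirmation="prod-eu-1")))
```

The friction is concentrated on the one irreversible step. Previews are always available, but the command that changes the cluster requires the operator to state, in full, which cluster they believe they are changing.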

The discipline of designing protective friction in software systems has been formalised in security engineering as the principle of least authority and in production engineering as change management friction: mandatory peer review, approval chains, and canary deployment requirements before production changes. Each of these mechanisms increases the minimum action complexity required to change a high-consequence production system. Each represents a rational IEAF reduction strategy.

Measuring IEAF and Building Systems Below the Threshold

The Interface Error Amplification Factor is not difficult to measure in practice, though it is rarely calculated explicitly. For any interface, the measurement protocol has three steps: identify the most severe consequence accessible through the interface, scored on a severity scale appropriate to the domain; trace the minimum number of discrete actions required to reach that consequence state; and divide the consequence severity by that minimum action count. Any system whose ratio exceeds a domain-appropriate threshold is a candidate for protective friction insertion.
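
Written as a formula, the protocol is a single ratio. The symbols are introduced here for illustration rather than taken from the text: S_max is the severity of the most severe reachable consequence, A_min is the minimum number of discrete actions needed to reach it, and τ_domain is the domain-appropriate threshold. The 0 to 100 severity scale in the worked lines is an assumption, since the text does not fix units.

```latex
\begin{aligned}
\mathrm{IEAF} &= \frac{S_{\max}}{A_{\min}}, \qquad \text{candidate for protective friction if } \mathrm{IEAF} > \tau_{\text{domain}} \\
S_{\max} = 100,\ A_{\min} = 1 &\;\Rightarrow\; \mathrm{IEAF} = 100 \\
S_{\max} = 100,\ A_{\min} = 4 &\;\Rightarrow\; \mathrm{IEAF} = 25
\end{aligned}
```

Lengthening the minimum path from one action to four cuts the IEAF by the same factor of four without changing the consequence itself, which is the lever protective friction pulls.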

The threshold is domain-specific. A consumer social media interface can tolerate a higher IEAF threshold for most functions because their consequences are low. A clinical drug ordering interface, an aircraft autoflight mode selector, a nuclear control room, and a financial trading system require IEAF reduction even at the cost of operational efficiency. The appropriate design response is not to make the interface harder overall; it is to asymmetrically increase the minimum action complexity of the specific paths that lead to high-consequence states, while keeping routine operations efficient. This is what the Two-Man Rule, the circuit breaker, and the PAL system each achieve.
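
The measurement protocol and the asymmetric design response can be sketched together by modelling an interface as a directed graph of states in which each edge is one discrete action. Breadth-first search then gives the minimum action count to every state, and the IEAF of a consequence state is its severity divided by that count. The sketch computes IEAF per consequence state rather than only for the single most severe one, so that individual paths can be compared. The state names, severity scores, and threshold are hypothetical values chosen only to show that inserting confirmation steps on the destructive path lowers its IEAF while the routine path keeps its original length.

```python
from collections import deque

Graph = dict[str, list[str]]


def min_actions(graph: Graph, start: str) -> dict[str, int]:
    """Breadth-first search: fewest discrete actions from start to each reachable state."""
    dist = {start: 0}
    queue = deque([start])
    while queue:
        state = queue.popleft()
        for nxt in graph.get(state, []):
            if nxt not in dist:
                dist[nxt] = dist[state] + 1
                queue.append(nxt)
    return dist


def ieaf_report(graph: Graph, start: str, severity: dict[str, float]) -> dict[str, float]:
    """IEAF per reachable consequence state: severity / minimum action count."""
    dist = min_actions(graph, start)
    return {s: severity[s] / dist[s] for s in severity if s in dist and dist[s] > 0}


# Hypothetical interface: one action reaches "delete_all" from the home screen.
before: Graph = {
    "home": ["edit_record", "delete_all"],
    "edit_record": ["save"],
}
# Same interface with two confirmation steps inserted only on the destructive path.
after: Graph = {
    "home": ["edit_record", "confirm_screen"],
    "confirm_screen": ["typed_confirmation"],
    "typed_confirmation": ["delete_all"],
    "edit_record": ["save"],
}
severity = {"save": 2.0, "delete_all": 90.0}  # hypothetical 0-100 severity scale
THRESHOLD = 40.0  # hypothetical domain threshold

for name, graph in (("before", before), ("after", after)):
    report = ieaf_report(graph, "home", severity)
    flagged = sorted(s for s, v in report.items() if v > THRESHOLD)
    print(name, report, "flagged:", flagged)
# before {'save': 1.0, 'delete_all': 90.0} flagged: ['delete_all']
# after {'save': 1.0, 'delete_all': 30.0} flagged: []
```

The routine "save" path costs two actions in both versions; only the path to the high-consequence state grew, from one action to three, which drops its IEAF below the hypothetical threshold.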

The simplification of interfaces is a legitimate and valuable design goal in most contexts. The error is treating simplicity as an unconditional virtue. In high-stakes domains, some paths should be long, some confirmations should be unavoidable, and some locks should require two keys. The IEAF metric is a tool for distinguishing protective complexity from incidental complexity, and for making the case, in engineering terms, that some friction deserves to be kept.