The Pilots Who Did Not Know What the Plane Was Doing

In October 2013, a Cessna Citation jet operating as Sundance Air flight 701 crashed near Wichita, Kansas, killing all seven aboard. The National Transportation Safety Board's accident report noted that the captain, despite extensive flight experience, had accumulated limited time in the specific avionics suite of the aircraft and had demonstrated difficulty during proficiency checks with the flight management computer interface. In the minutes before the crash, as the aircraft entered uncontrolled flight, the captain's inputs were described as "inconsistent with the aircraft's actual flight condition." He knew how to fly. He did not know what his avionics were doing, or what they needed from him.

The same year, the crash of Asiana Airlines flight 214 in San Francisco — a Boeing 777 that struck a seawall during approach, killing three passengers — produced an accident report whose central finding was mode confusion in the autothrottle system. The crew had activated a combination of autopilot and autothrottle modes that caused the autothrottle to retard to idle during the approach. The crew did not notice until the aircraft was 34 feet above the runway threshold and decelerating below minimum approach speed. The autothrottle had been set; the expected mode was active on the display; the crew's working model of the aircraft's state was wrong. Asiana 214 is now a canonical case study in what aviation safety researchers call automation surprise — the IEAF failure mode of the glass cockpit era.

The Glass Cockpit Raised IEAF in the Wrong Dimension

The transition from electromechanical flight instruments to glass cockpit avionics in commercial aviation began in the 1980s with the Boeing 757/767 and Airbus A310, and was substantially complete in new aircraft deliveries by the late 1990s. The change was motivated by compelling benefits: reduced panel clutter, weight savings, improved legibility, automatic cross-checking of sensor data, and — most importantly for operational economics — the ability to automate repetitive tasks through the flight management system (FMS), reducing workload on long-haul segments and enabling smaller flight crew complements. The glass cockpit delivered these benefits, without question. The fatal drawback of the glass cockpit, equally real and far less often stated, is that it dramatically increased the number of discrete operational modes the aircraft can occupy and reduced the sensory cues available to distinguish between them.

The Interface Error Amplification Factor for a glass cockpit can be measured in the mode-confusion regime: the minimum number of distinct button presses required to place the aircraft in a specific autoflight state (low: as few as two or three), divided by the cognitive steps required to reliably know which autoflight state the aircraft is in (high: mode displays are small, acronym-dense, and show 4–8 active modes at once). The ratio is unfavourable for safety, because entering a state costs far less effort than confirming it. A modern glass-cockpit autoflight system can occupy dozens of distinct operational modes, each governing a different combination of automated altitude tracking, speed tracking, heading tracking, and envelope protection, and the mode display that communicates the current state uses abbreviated labels that even experienced pilots misread under workload.
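The ratio described above can be written down as a small calculation. This is a minimal sketch, not a validated metric: the class name, the field names, and the sample numbers are hypothetical and chosen only to illustrate the arithmetic. It assumes that a smaller value means a state is cheaper to enter than to confirm.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModeConfusionEstimate:
    """Hypothetical inputs for the mode-confusion ratio described in the text."""

    presses_to_enter_state: int   # minimum button presses to reach an autoflight state
    steps_to_identify_state: int  # cognitive steps to confirm which state is active

    def ratio(self) -> float:
        """Presses needed to enter a state divided by steps needed to identify it."""
        if self.steps_to_identify_state <= 0:
            raise ValueError("steps_to_identify_state must be positive")
        return self.presses_to_enter_state / self.steps_to_identify_state


# Illustrative numbers only; these are assumptions, not measurements.
glass = ModeConfusionEstimate(presses_to_enter_state=2, steps_to_identify_state=8)
panel = ModeConfusionEstimate(presses_to_enter_state=3, steps_to_identify_state=1)

print(glass.ratio())  # 0.25
print(panel.ratio())  # 3.0
```

With these assumed inputs the glass-cockpit case scores well below the electromechanical case, which is the asymmetry the paragraph describes: little effort to change state, much more effort to verify it.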

The Mode Confusion Architecture

How Modes Multiply Beyond Cognitive Management

The Airbus A330/A340 autoflight system has over 30 distinct flight guidance modes, each associated with different rules for how the aircraft responds to control inputs and external conditions. The Boeing 777 FMS has a comparably complex state space. The interface for managing these modes evolved from the principle of maximum automation: each additional mode was added to handle a specific scenario more efficiently or safely than manual flight. The cumulative result of thirty years of added modes is a system whose state space exceeds the working memory capacity of most humans operating under moderate workload.

The Airbus FCOM (Flight Crew Operating Manual) for the A330 runs to approximately 1,500 pages. A significant fraction of that documentation describes the conditions under which mode transitions occur, which conditions trigger reversions to different modes, and how the mode display should be interpreted. The documentation is not read in flight. It is studied in training simulators over months of ground school and practised in recurrent proficiency checks. The expectation is that pilots will have internalised the system's state-transition logic to the degree that they can interpret display indications correctly under workload and fatigue. The accident record suggests this expectation is not always met.
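The gap between the system's state-transition logic and the crew's internal model of it can be sketched as a toy state machine. Everything here is an assumption made for illustration: the mode names, the events, and the transition table are hypothetical and do not come from any real aircraft or manual. The sketch also assumes, pessimistically, that the crew's belief only follows transitions they commanded themselves.

```python
# Hypothetical modes and events; not taken from any real autoflight system.
TRANSITIONS = {
    ("ALT_HOLD", "pilot_selects_descent"): "DESCENT",
    ("DESCENT", "pilot_selects_alt_hold"): "ALT_HOLD",
    ("ALT_HOLD", "sensor_disagree"): "DEGRADED",  # reversion with no crew action
    ("DESCENT", "sensor_disagree"): "DEGRADED",   # reversion with no crew action
}

# Events the crew initiates and therefore knows about.
PILOT_EVENTS = {"pilot_selects_descent", "pilot_selects_alt_hold"}


def run(events, start="ALT_HOLD"):
    """Return (actual_mode, believed_mode) after applying events in order.

    The believed mode is updated only on crew-commanded events, modelling a
    crew that never re-reads the mode display (an illustrative assumption).
    """
    actual = believed = start
    for event in events:
        next_actual = TRANSITIONS.get((actual, event), actual)
        if event in PILOT_EVENTS:
            believed = TRANSITIONS.get((believed, event), believed)
        actual = next_actual
    return actual, believed


print(run(["pilot_selects_descent"]))
# ('DESCENT', 'DESCENT')
print(run(["pilot_selects_descent", "sensor_disagree"]))
# ('DEGRADED', 'DESCENT')
```

In the second run the actual and believed modes diverge after a single uncommanded reversion. Training and display scanning exist to close that gap; the sketch only shows how little it takes to open it.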

The Bureau d'Enquêtes et d'Analyses (BEA) analysis of Air France flight 447 (AF447) found that the crew had not received adequate training in alternate law handling, the degraded flight control mode triggered by the sensor failure. This was not because the training did not exist; it was because alternate law situations are rare enough that recurrent training tends to de-emphasise them relative to more likely scenarios. The system's full complexity was accessible but rarely exercised. The first time the AF447 crew encountered a full-stall scenario at altitude under alternate law was in the accident itself.

The MCAS Architecture as IEAF Extreme

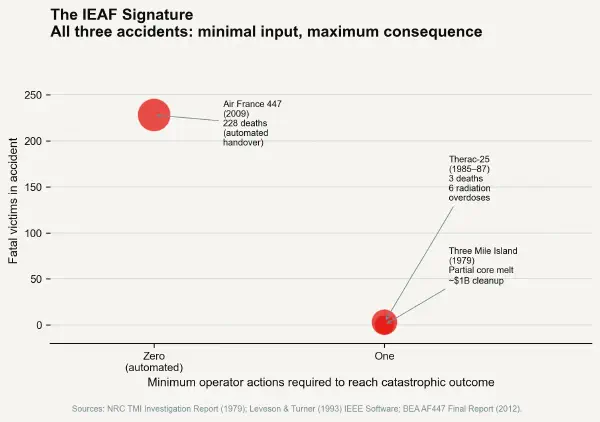

The Boeing 737 MAX MCAS (Maneuvering Characteristics Augmentation System) failure presents the most extreme IEAF calculation possible: the ratio of consequence to minimum action complexity approaches infinity, because the minimum operator action required to initiate the process leading to catastrophic outcome was zero.

MCAS was an automated pitch trim system installed on the 737 MAX to compensate for the pitch-up tendency introduced by its larger LEAP engines, which are mounted farther forward and higher on the wing than the engines of earlier 737s. When the aircraft's angle of attack (AoA) exceeded a threshold, MCAS activated and trimmed the horizontal stabiliser nose-down. The system was designed to activate without crew command and was not, in the initial 737 MAX certification, documented in the crew's flight manual. Pilots of Lion Air 610 in October 2018 and Ethiopian Airlines 302 in March 2019 encountered MCAS activation triggered by a single malfunctioning AoA sensor and responded with control inputs that were correct for the aircraft their flight manual described, but inconsistent with what MCAS was doing.

The IEAF of MCAS as designed was structurally problematic beyond its specific electronic failure. The system had a single-sensor input with no cross-checking, no override confirmation required from the crew, and a sequential activation that could repeat at 5-second intervals. Any malfunction of the single AoA sensor could trigger potentially unrecoverable trim movement with zero crew action. The design simultaneously minimised action complexity for activation and maximised consequence severity. Under the IEAF framework, this is not an accident. It is the formal definition of a high-IEAF system.
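The difference between a single-sensor trigger and a cross-checked one can be shown in a few lines. This is an illustrative sketch only, not Boeing's implementation: the function names and both threshold values are hypothetical, and real flight control logic involves many more conditions than angle of attack alone.

```python
AOA_THRESHOLD_DEG = 14.0  # hypothetical activation threshold
MAX_DISAGREE_DEG = 5.0    # hypothetical limit on left/right sensor disagreement


def single_sensor_trigger(aoa_deg: float) -> bool:
    """Activate on one sensor's reading, with no cross-check."""
    return aoa_deg > AOA_THRESHOLD_DEG


def cross_checked_trigger(left_aoa_deg: float, right_aoa_deg: float) -> bool:
    """Activate only when both sensors agree and both exceed the threshold."""
    if abs(left_aoa_deg - right_aoa_deg) > MAX_DISAGREE_DEG:
        return False  # sensors disagree: treat the data as untrustworthy
    return min(left_aoa_deg, right_aoa_deg) > AOA_THRESHOLD_DEG


# One failed sensor reading an implausibly high value, one healthy sensor.
faulty, healthy = 40.0, 3.0

print(single_sensor_trigger(faulty))           # True
print(cross_checked_trigger(faulty, healthy))  # False
print(cross_checked_trigger(16.0, 15.5))       # True
```

Under these assumptions the single-sensor version activates on one bad reading with no crew action at all, while the cross-checked version inhibits itself when the sensors disagree and still activates when both report a genuinely high angle of attack.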

What the Accident Data Shows About Mode Confusion

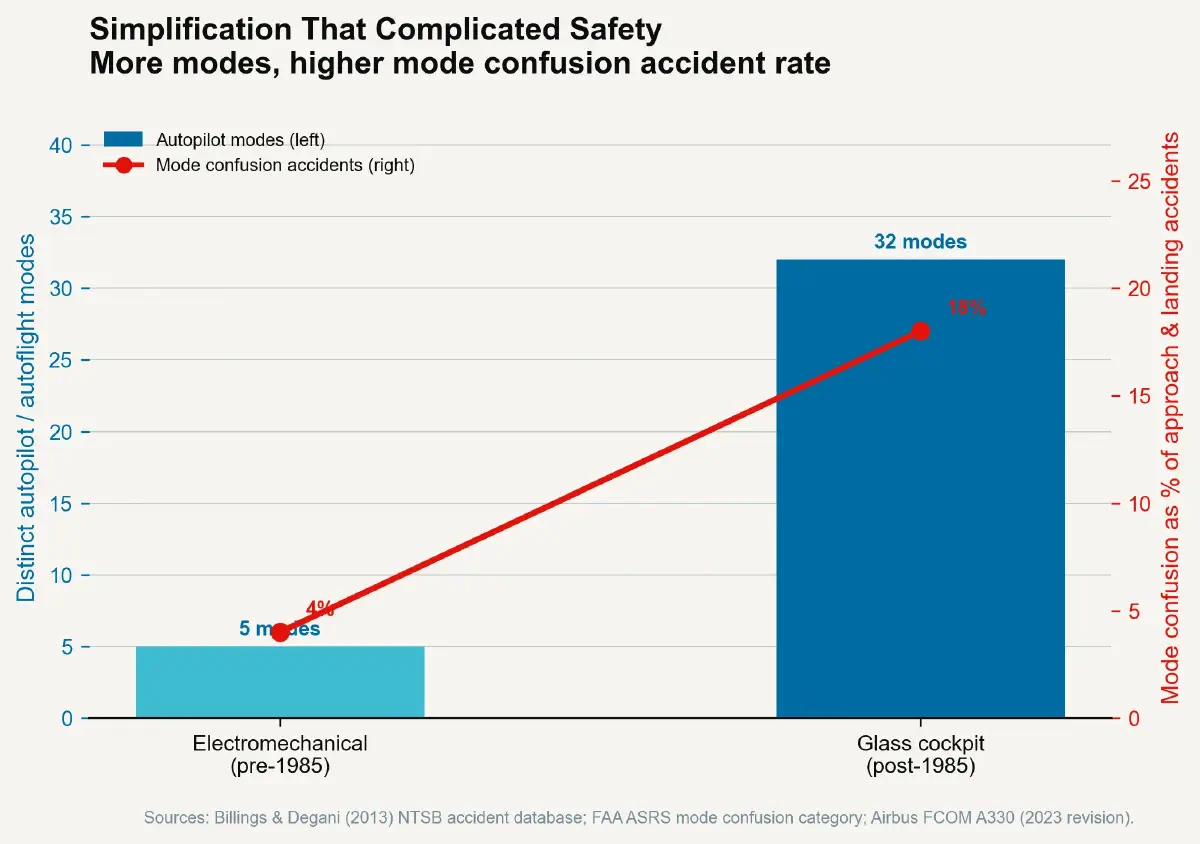

The FAA's Aviation Safety Hotline and the NASA-administered Aviation Safety Reporting System (ASRS) databases contain thousands of reported events involving mode confusion: situations where pilots were uncertain of the active autoflight mode, made mode selections inconsistent with the intended flight path, or experienced unexpected mode reversions. A 2013 study by Billings and Degani, reviewing accident reports from the NTSB and equivalent international agencies, attributed approximately 18% of approach and landing accidents involving glass-cockpit aircraft to mode confusion as a contributing or causal factor.

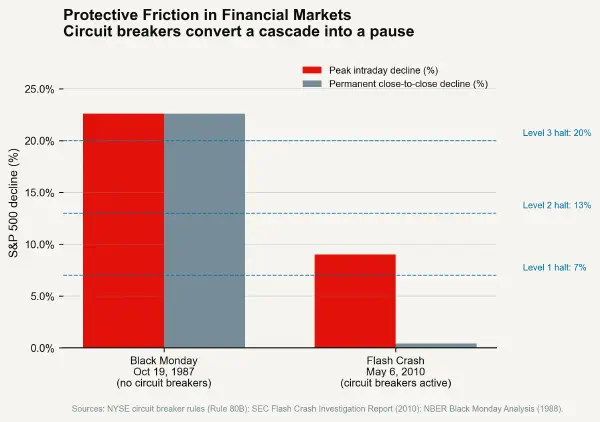

The comparable statistic for the electromechanical panel era (pre-1985) is difficult to construct because mode confusion was not a recognised accident category. Electromechanical autopilots had fewer modes, and their state was physically indicated by visible lever positions and mechanical switches rather than by software flags displayed on LCD screens. The physical state of an electromechanical system is harder to misread than a software status register. This is the asymmetry at the heart of the glass cockpit's IEAF profile: physical controls that take discrete positions have higher minimum action complexity to change (you must move a physical object) and lower confusion potential (the position is directly visible). Software controls that change state in response to menu selections have lower minimum action complexity and higher confusion potential, which is exactly the wrong trade-off for a high-consequence operational environment.

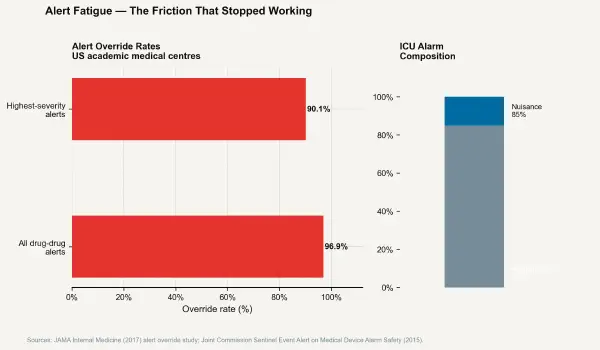

The Partial Response: Procedure as Friction Substitute

The aviation industry's primary response to mode confusion and automation surprise has been to add procedures — checklists, verbal callouts, cross-checking protocols, and simulator exercises specifically designed to build mode-management skill. This response addresses the symptom without addressing the source: the IEAF of the underlying interface is unchanged. The procedures layer protective friction on top of a high-IEAF system by requiring additional cognitive steps before certain mode selections are finalised.

Some of these procedural additions are effective in normal operations. They are least effective under the high-workload, time-compressed conditions where mode confusion matters most. When two pilots are managing an aircraft in deteriorating weather with ATC communications ongoing, the checklist procedure that was practised in a calm simulator environment becomes another competing demand rather than a reliable friction barrier.

The aviation industry understands this tension and has begun addressing it through interface redesign: larger, more legible mode displays; more systematic use of colour coding to indicate mode status; and improved autoflight annunciators that describe what the system is doing in plain language rather than in acronyms. These are incremental reductions in IEAF at the display comprehension layer, not at the mode activation layer. The next post examines a domain where the IEAF problem is arguably more acute and far less well-managed: electronic health record interfaces in clinical medicine.