Two Switches and a Catastrophe

At 4:00 a.m. on March 28, 1979, a maintenance technician at the Three Mile Island nuclear generating station in Pennsylvania performed a routine resin-cleaning operation on the secondary coolant loop. A pair of feedwater pumps tripped, as designed. The reactor's turbine tripped, as designed. A relief valve opened on the primary system to reduce pressure, as designed. The valve then failed to close, and the back-lit indicator on the control panel told the operators that it had. The operators believed the valve was shut because a light said it was shut. The light was wired to the valve's actuation solenoid, not to the valve's physical position. The solenoid had de-energised; the valve was stuck open. For two hours and twenty minutes, the operators made the emergency worse by following the logic of the indicator rather than the logic of the system it misrepresented.

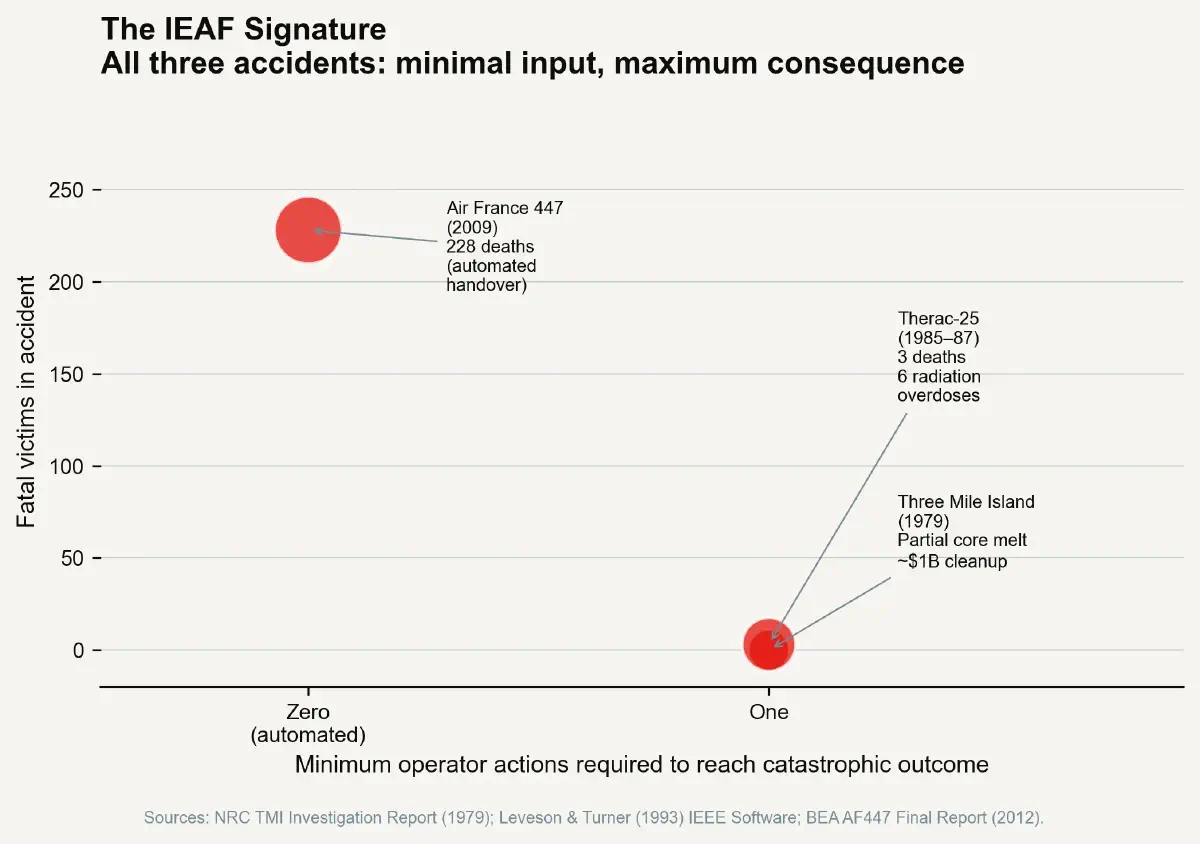

The most consequential nuclear accident in American civilian history was initiated not by a massive explosion, a catastrophic systems failure, or a human decision to do something dangerous. It was initiated by a feedwater pump trip — a routine, designed response — and extended by an indicator light telling operators something that was not true. The minimum operator action to reach maximum consequence was: trust the panel display. That is the Interface Error Amplification Factor made physical.

Every Complex System Has an IEAF Profile

The Three Mile Island accident, the Therac-25 radiation overdoses, and the Air France 447 crash are three of the most dissected disasters in the literature of human factors and systems safety. Each is cited in engineering education, in flight safety curricula, in medical device regulation, and in risk management frameworks. Each is treated as a unique specimen of its era, its technology, and its regulatory environment. What they share — the mechanism that unites them beneath their surface differences — is rarely stated with the precision it deserves: each produced catastrophic outcomes by routing a small, simple, common action to an extreme consequence through an interface that had been designed, in the name of usability, to reduce the complexity of reaching operational states. The Interface Error Amplification Factor (IEAF) — maximum consequence severity divided by minimum action complexity to reach that consequence — is not a failure mode of bad design. It is an emergent property of systems designed to be easy to use.
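The ratio can be made concrete with a small sketch. The 1-to-10 severity scale, the count of operator actions as a stand-in for "action complexity", and the names `FailurePath` and `ieaf` are all assumptions introduced here for illustration; the definition above says only that IEAF is maximum consequence severity divided by the minimum action complexity needed to reach it.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FailurePath:
    """One route from routine operation to a bad outcome (illustrative only)."""
    name: str
    severity: float         # consequence severity on an assumed 1-10 scale
    action_complexity: int  # assumed count of deliberate operator actions, >= 1


def ieaf(paths: list[FailurePath]) -> float:
    """Maximum severity divided by the fewest actions that reach that severity."""
    if any(p.action_complexity < 1 for p in paths):
        # Assumption: a transition needing no operator input is counted as 1
        # by the caller, so the ratio stays finite.
        raise ValueError("action_complexity must be at least 1")
    worst = max(p.severity for p in paths)
    cheapest = min(p.action_complexity for p in paths if p.severity == worst)
    return worst / cheapest


paths = [
    FailurePath("single indicator trusted", severity=10, action_complexity=1),
    FailurePath("same outcome via cross-checked route", severity=10, action_complexity=4),
    FailurePath("minor trip", severity=2, action_complexity=1),
]
print(ieaf(paths))      # 10.0: the one-action route sets the score
print(ieaf(paths[1:]))  # 2.5: with that route removed, four actions are needed
```

Under these assumed scales, the score is driven entirely by the shortest route to the worst outcome, which is the point the definition makes.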

The IEAF Case Studies

Three Mile Island: The Light That Was Not the Valve

The TMI control room of 1979 was not an obsolete or poorly designed facility by the standards of its era. It had been built according to US Atomic Energy Commission and NRC guidelines, reviewed by qualified human factors engineers, and staffed by licensed reactor operators. The panel arrangement had been optimised to present critical system states in a legible visual format. The optimisation, however, conflated ease of comprehension with accuracy of indication. The pilot-operated relief valve (PORV) indicator lamp was wired to the easiest instrumentation point — the solenoid command signal — rather than to a direct valve position sensor. Fitting a direct valve position indicator would have added cost, required additional piping penetrations, and introduced its own potential reliability concerns. The shortcut was reasonable in isolation.
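The difference between indicating the command and indicating the position can be shown with a toy model. This is a minimal sketch, not a representation of the plant's actual instrumentation: the `ReliefValve` class, its fields, and both lamp functions are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class ReliefValve:
    """Toy valve whose command signal and physical position can disagree."""
    solenoid_energised: bool = False  # what the control system is commanding
    physically_open: bool = False     # what the valve is actually doing
    stuck: bool = False               # a stuck valve ignores a close command

    def command_open(self) -> None:
        self.solenoid_energised = True
        self.physically_open = True

    def command_close(self) -> None:
        self.solenoid_energised = False
        if not self.stuck:
            self.physically_open = False


def lamp_from_command(valve: ReliefValve) -> str:
    """Indicator wired to the solenoid command signal."""
    return "OPEN" if valve.solenoid_energised else "CLOSED"


def lamp_from_position(valve: ReliefValve) -> str:
    """Indicator wired to a direct position sensor."""
    return "OPEN" if valve.physically_open else "CLOSED"


valve = ReliefValve()
valve.command_open()
valve.stuck = True     # the valve sticks while open
valve.command_close()  # the solenoid de-energises, the valve does not move

print(lamp_from_command(valve))   # CLOSED
print(lamp_from_position(valve))  # OPEN
```

The two lamps agree in every state except the one that matters: a close command that the valve does not obey.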

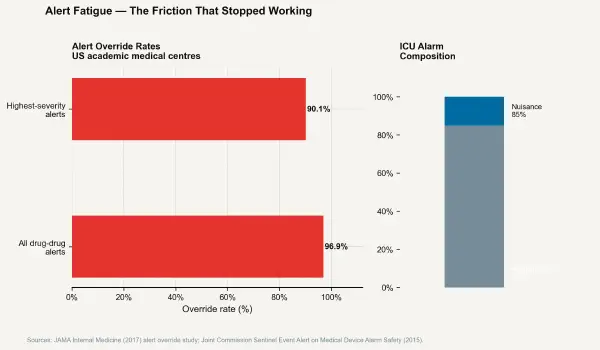

In operation, the shortcut created an IEAF approaching the theoretical maximum: the single most common routine operator act (trusting a status indicator that reads normal while the plant is off-nominal) led directly to the sustained primary coolant loss that produced partial core melt. The NRC's post-accident investigation documented 438 alarms activating in the two-hour window of the event. Operators were in a state of sustained sensory overload, and the most critical piece of information in the entire facility was being delivered by a lamp wired to the wrong component.

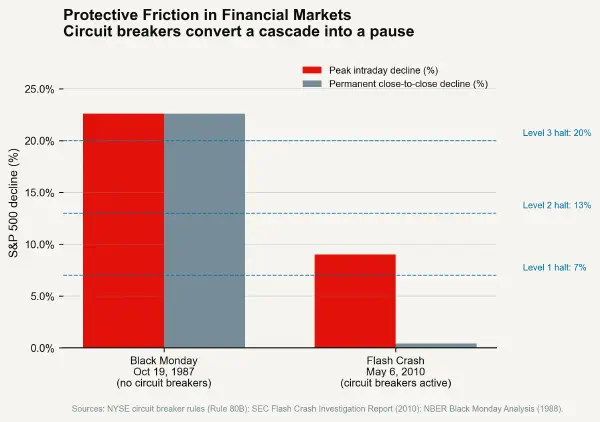

The TMI legacy produced US nuclear industry reforms that directly addressed IEAF, though the term did not yet exist. The post-TMI NRC requirements for upgraded control room human factors engineering mandated direct valve position indication, expanded emergency operating procedures, and crew resource management training drawn from aviation practices. These reforms deliberately inserted action complexity — additional verification steps, independent confirmation procedures — between operators and critical system states. They were, in the vocabulary of human factors engineering, friction insertions. They made it harder to act on a single indicator without cross-checking. They raised the minimum action complexity required to reach a dangerous operational state, and the post-TMI civil nuclear safety record in the United States reflects the benefit.
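A friction insertion of this kind can be sketched as a rule that refuses to accept a critical state on the word of one source. The function name `confirmed_closed`, the reading names, and the two-source threshold are assumptions for illustration, not a description of any actual post-TMI procedure.

```python
def confirmed_closed(readings: dict[str, bool], required: int = 2) -> bool:
    """Accept "valve closed" only if at least `required` independent
    sources report it as closed."""
    agreeing = sum(1 for is_closed in readings.values() if is_closed)
    return agreeing >= required


# Hypothetical readings: the command lamp says closed, the others disagree.
readings = {
    "command_lamp": True,
    "position_sensor": False,
    "downstream_temperature_normal": False,
}

print(confirmed_closed(readings, required=1))  # True: one lamp is enough
print(confirmed_closed(readings, required=2))  # False: the cross-check blocks it
```

Raising `required` from 1 to 2 is the whole intervention: it adds one verification step between the operator and the conclusion that the valve is shut.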

Therac-25: The Race Condition That Became a Lethal Dose

Between June 1985 and January 1987, the Therac-25 medical linear accelerator system administered at least six massive radiation overdoses at treatment facilities in the United States and Canada. Three patients died from radiation injuries. The overdoses ranged from 40 to over 100 times the prescribed therapeutic dose. The Therac-25 was a software-controlled upgrade to earlier hardware-interlocked models (the Therac-6 and Therac-20), and its design reflected a deliberate decision to remove the hardware safety interlocks that had made those earlier models heavier, more expensive, and slower to operate.

The specific failure mode involved a race condition in the treatment setup software. When an operator entered a treatment setup and then quickly edited the mode selection (a common correction that experienced operators made at speed), the display could show the edited mode, the low-current electron mode, while the machine remained configured to produce the high-current beam intended for X-ray mode. If the operator then initiated treatment, that high-current beam was delivered without the X-ray target in place to attenuate it, and with no hardware safety system present to interrupt it.
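The shape of this bug can be reproduced in a deterministic toy model. This is not the Therac-25 software: the `ToyTreatmentConsole` class, the tick-based timing, the `SETUP_TICKS` value, and the neutral mode names "A" and "B" are all assumptions made to show how a display and a machine state can diverge when an edit arrives during setup.

```python
class ToyTreatmentConsole:
    """Display updates at once; the hardware takes SETUP_TICKS to reconfigure."""

    SETUP_TICKS = 8  # assumed reconfiguration time, in arbitrary ticks

    def __init__(self) -> None:
        self.displayed_mode: str | None = None
        self.hardware_mode: str | None = None
        self._pending: str | None = None
        self._ticks_left = 0

    def enter_mode(self, mode: str) -> None:
        self.displayed_mode = mode  # the screen always shows the latest entry
        if self._ticks_left == 0:
            self._pending = mode
            self._ticks_left = self.SETUP_TICKS
        # Deliberate bug: an edit made while setup is still running is shown
        # on screen but never forwarded to the hardware.

    def tick(self, n: int = 1) -> None:
        for _ in range(n):
            if self._ticks_left > 0:
                self._ticks_left -= 1
                if self._ticks_left == 0:
                    self.hardware_mode = self._pending

    def consistent(self) -> bool:
        return self.displayed_mode == self.hardware_mode


slow = ToyTreatmentConsole()
slow.enter_mode("A")
slow.tick(10)          # operator waits for setup to finish
slow.enter_mode("B")
slow.tick(10)
print(slow.displayed_mode, slow.hardware_mode, slow.consistent())  # B B True

fast = ToyTreatmentConsole()
fast.enter_mode("A")
fast.tick(2)           # operator edits before setup has finished
fast.enter_mode("B")
fast.tick(10)
print(fast.displayed_mode, fast.hardware_mode, fast.consistent())  # B A False
```

In this model the slow operator is safe and the fast one is not, even though both typed exactly the same keys.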

The IEAF of the Therac-25 in this failure mode was extreme: a single fast keystroke edit — an action so routine that experienced operators performed it dozens of times per day — could route the patient to a lethal overdose. The action complexity required to reach maximum consequence was not merely low; it was lower for the fatal configuration than for normal operation, because the fatal path was the result of the more efficient workflow the machine's software had been designed to facilitate. Speed — the reduction of operational friction — was the direct enabling factor.

Nancy Leveson's analysis of the Therac-25 remains the definitive account of software-mediated safety failures and is the foundational case study of software safety engineering. Its central lesson is not about software quality control, though that was implicated. It is about the removal of hardware interlocks that had been, in the language of systems safety, the blocking barriers between a common operator action and a lethal outcome. The interlocks carried a high friction cost, which is why removing them made the machine simpler to operate. They also held the IEAF extremely low: their presence made it physically impossible to reach the fatal state through any sequence of software commands. The Therac-25 replaced physical impossibility with software logic. Software logic has race conditions.

Air France 447: The Autopilot That Handed Back an Aircraft

On the evening of May 31, 2009, Air France flight 447 departed Rio de Janeiro bound for Paris carrying 228 passengers and crew. At approximately 2:10 a.m. UTC on June 1, cruising at 35,000 feet over the Atlantic, the aircraft's pitot tubes (its airspeed sensors) became blocked by ice crystals during a traverse of a deep convective cell. The autopilot disconnected. The autothrust disconnected. The aircraft entered alternate law, a degraded flight control mode in which the fly-by-wire envelope protections that prevent the aircraft from entering aerodynamic stall are no longer active. The crew had 4 minutes and 24 seconds to understand what had happened and fly the aircraft before it hit the ocean.

They did not. The aerodynamics of AF447's final 3 minutes and 30 seconds, a sustained full stall from 35,000 feet with all three pilots eventually in the cockpit, has been analysed in exhaustive detail. What the accident report ultimately surfaces is an IEAF problem in the mode transition architecture of the flight control system. The normal law to alternate law transition, triggered automatically by the airspeed sensor failure, produced a cockpit state that was ambiguous, alarm-saturated, and physically counterintuitive given the crew's trained expectations of how the aircraft behaved. The pilots were not ignorant; they were trained on the autopilot-active regime and had limited practice responding to a full stall at altitude in an aircraft whose flight envelope protections had reverted. The transition from easy-to-use (autopilot, envelope protections) to genuinely difficult (alternate law, raw data flying, 35,000-foot stall recognition) required zero inputs from the crew. The aircraft demoted itself.

The Pattern Beneath the Three Cases

The TMI indicator lamp, the Therac-25 keystroke shortcut, and the AF447 mode transition are not isolated engineering failures. They are three instances of the same structural pattern: interface simplification that reduced the friction between a routine state and a catastrophic one. In each case, the redesign or design choice that produced the failure was motivated by legitimate engineering rationale — cost reduction, operational efficiency, cognitive load management. In each case, the unintended consequence was a reduction in the action complexity separating the operator from maximum consequence. The IEAF rose because the minimum path to the worst outcome was shortened.

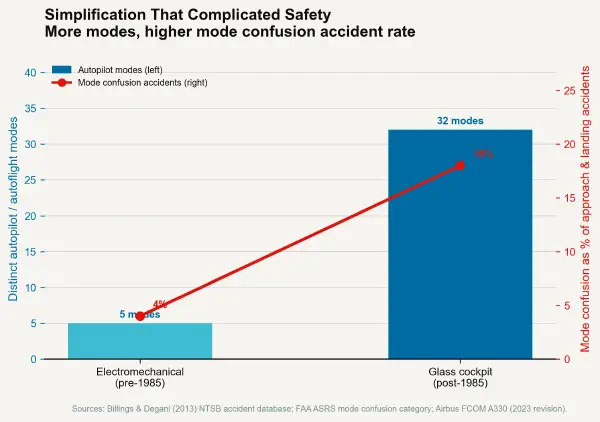

This pattern does not speak against simplicity in interface design. It identifies the specific form of simplicity that is dangerous: not simplicity in the routine operating regime, but simplicity in the path from routine to catastrophic. The next post examines this problem in its most visible current form — the transition from electromechanical to digital cockpit interfaces in commercial aviation, and what the accident record reveals about IEAF in the mode-confusion regime.