The Orange Glow in the Dark

In April 1937, Frank Whittle ran the first successful test of his experimental WU (Whittle Unit) turbojet engine at a factory in Rugby, England. The test, which followed two years of failed attempts and near-constant compressor surging, lasted just 20 minutes before a fuel valve was shut down. In those 20 minutes, two things became immediately apparent to the engineers present. The first was that the jet engine worked: the sustained thrust of a turbojet was a practical proposition, not a theoretical curiosity. The second was that the turbine blades were glowing. Not hot in the way that metal glows under a blacksmith's hammer, but orange, visibly, in the middle of a working engine, radiating heat at temperatures measurably above the rated operating limit of the alloy used to make them.

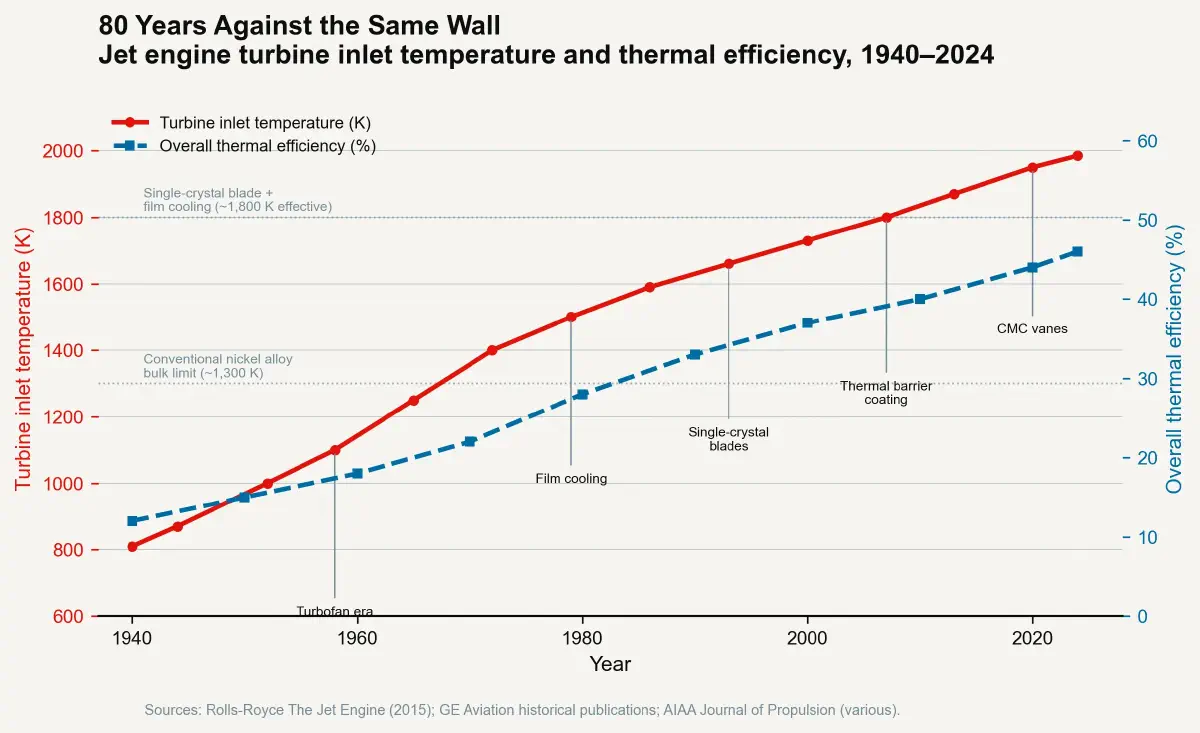

The story of jet engine development over the following eight decades is the story of that luminous constraint: an engineering civilisation's sustained, expensive, remarkably successful attempt to make turbines operate at gas temperatures above the melting point of the metal they are made from. The jet age, with its fuel efficiency, intercontinental range, and economic accessibility, is not primarily a story of aerodynamic refinement or digital avionics. It is a story of heat management at extreme temperatures. And the dominant remaining variable in aviation's future efficiency gains is a thermal margin of perhaps 50–100 K.

Heat Is the Master Variable in Thermodynamic Efficiency

The central asymmetry of the jet engine is not accidental; it is written into thermodynamic law. The Brayton cycle, which governs the thermodynamic performance of a gas turbine, is bounded by the Carnot limit $1 - T_{low}/T_{high}$: the higher the temperature at which energy is added to the working fluid, and the lower the temperature at which it is rejected, the more of the fuel energy can be converted to useful work rather than waste heat. In the simplified ideal Brayton cycle the efficiency itself takes the form $1 - T_{1}/T_{2}$, where $T_{1}$ and $T_{2}$ are the compressor inlet and exit temperatures, so it is set by the pressure ratio. Raising the peak temperature raises both the pressure ratio at which the cycle still delivers useful net work and the work extracted per kilogram of air.
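To make the temperature dependence concrete, here is a minimal sketch of the textbook ideal-cycle relations. It is not taken from any engine programme. It computes the Carnot bound $1 - T_{low}/T_{high}$, plus the ideal Brayton efficiency and net specific work for a given pressure ratio and turbine inlet temperature. The function names, the 288 K inlet temperature, the pressure ratio of 40, and the constant air properties (γ = 1.4, cp = 1005 J/(kg·K)) are all illustrative assumptions.

```python
def carnot_limit(t_low_k: float, t_high_k: float) -> float:
    """Upper bound 1 - T_low/T_high for any heat engine between two temperatures."""
    return 1.0 - t_low_k / t_high_k


def ideal_brayton(pressure_ratio: float, t_inlet_k: float, tit_k: float,
                  gamma: float = 1.4, cp: float = 1005.0):
    """Return (thermal efficiency, net specific work in J/kg) for an ideal cycle.

    Assumes isentropic compression and expansion and constant gas properties.
    """
    # Isentropic temperature ratio across the compressor (and the turbine).
    tau = pressure_ratio ** ((gamma - 1.0) / gamma)
    efficiency = 1.0 - 1.0 / tau
    compressor_work = cp * t_inlet_k * (tau - 1.0)
    turbine_work = cp * tit_k * (1.0 - 1.0 / tau)
    return efficiency, turbine_work - compressor_work


# Illustrative comparison of an early-era and a current-era turbine inlet temperature.
for tit in (1100.0, 1950.0):
    eta, work = ideal_brayton(pressure_ratio=40.0, t_inlet_k=288.0, tit_k=tit)
    print(f"TIT {tit:.0f} K: Carnot limit {carnot_limit(288.0, tit):.3f}, "
          f"ideal Brayton efficiency {eta:.3f}, net work {work / 1000:.0f} kJ/kg")
```

In this idealised model the efficiency at a fixed pressure ratio does not move with turbine inlet temperature. What the hotter cycle buys is a higher Carnot ceiling and much more net work per kilogram of air, which is what makes high pressure ratios usable in practice.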

For a jet engine, the practical governing variable is the Turbine Inlet Temperature (TIT): the temperature of the combustion gas immediately entering the high-pressure turbine stage. Each additional kelvin of TIT raises the achievable thermodynamic efficiency and allows more thrust to be extracted from a given fuel flow. The aviation industry has spent 80 years maximising TIT. The TDR for turbine development is the ratio of thrust and efficiency gain per kelvin of TIT increase, divided by the infrastructure cost to achieve and maintain blade integrity at that temperature. Understanding this ratio requires understanding what it takes to keep a blade alive at temperatures no alloy survives without assistance.

Inside the Materials Arms Race

The Temperature Progression and Its Engineering Cost

The first operational jet engines of the Second World War era — the Rolls-Royce Welland, the General Electric J31, the Junkers Jumo 004 — operated at TITs of approximately 1,000–1,100 K (730–830°C). The inlet temperature was limited by the nickel and cobalt alloys available at the time. Wrought nickel alloys of the 1940s began to lose structural integrity above approximately 700–750°C under the centrifugal stresses of turbine rotation. The blade design was conservative by necessity, and the early engines had operational lives measured in tens of hours before blade replacement was required.

The first major advance came through alloy development. Between the 1950s and 1970s, the introduction of directionally solidified nickel superalloys — cast such that the grain boundaries of the metal were oriented parallel to the blade's centrifugal stress axis, removing the transverse grain boundaries that were the primary failure initiation sites — raised practical TIT limits to approximately 1,200–1,300 K. Engine lifetimes extended to thousands of hours.

The second major advance was single-crystal nickel superalloy blades, developed through the late 1960s and commercialised in the 1980s by Pratt & Whitney, Rolls-Royce, and GE Aviation. A single-crystal blade, manufactured by directional solidification processes that produce a single continuous crystal lattice throughout the entire blade volume, has no grain boundaries at all. The catastrophic high-temperature failure modes of polycrystalline metals — grain boundary oxidation, grain boundary creep — are eliminated by eliminating grain boundaries. Single-crystal blades entered service in civil aviation in the Pratt & Whitney PW2037 (1984) and GE CF6-80C2 (1985), enabling sustained TITs of approximately 1,600–1,700 K — above the solidus temperature (onset of melting) of the alloy itself.

The engine was now operating at temperatures that would destroy the blade material — without internal cooling, the blades would liquefy within seconds of full-power operation. The cooling system built into the blade is what makes this possible.

The Internal Cooling Revolution

The cooling of modern turbine blades is an extreme precision manufacturing achievement that rarely appears in discussions of jet engine technology. A production high-pressure turbine blade in a current-generation civil engine, such as a GE LEAP or a Rolls-Royce Trent XWB, contains an internal network of cooling channels through which compressed air, bled from the compressor, flows continuously during operation. The air exits through arrays of film cooling holes on the blade surface, creating a thin boundary layer of cooler air between the blade metal and the combustion gas stream at 1,900 K or more. Without this film, the blade surface would see the full gas temperature; with it, the metal temperature is maintained at approximately 1,100–1,200 K, below the structural limit of the alloy.
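One common way to express how hard the cooling system is working is the overall cooling effectiveness, $(T_{gas} - T_{metal}) / (T_{gas} - T_{coolant})$. The sketch below applies that standard definition to the gas and metal temperatures quoted above. The coolant temperature of 900 K is an assumed, illustrative value for compressor bleed air, not a figure from the text, and the function name is hypothetical.

```python
def cooling_effectiveness(t_gas_k: float, t_metal_k: float, t_coolant_k: float) -> float:
    """Overall cooling effectiveness: (T_gas - T_metal) / (T_gas - T_coolant).

    0 means the metal sits at gas temperature; 1 means it sits at coolant temperature.
    """
    if t_gas_k <= t_coolant_k:
        raise ValueError("gas temperature must exceed coolant temperature")
    return (t_gas_k - t_metal_k) / (t_gas_k - t_coolant_k)


T_GAS_K = 1900.0      # gas stream temperature quoted in the text
T_COOLANT_K = 900.0   # ASSUMPTION: illustrative compressor bleed temperature

for t_metal_k in (1100.0, 1200.0):  # metal temperature range quoted in the text
    phi = cooling_effectiveness(T_GAS_K, t_metal_k, T_COOLANT_K)
    print(f"metal at {t_metal_k:.0f} K -> required effectiveness {phi:.2f}")
```

Under that assumed coolant temperature, holding the metal 700–800 K below the gas stream needs an effectiveness of roughly 0.7–0.8. A different bleed temperature shifts the number, so treat it as a way of reading the temperatures rather than as a design value.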

The dimensional precision required for these internal channels is among the tightest in any manufactured product. Channel wall thicknesses of approximately 0.5–1.0 mm are maintained throughout intricate three-dimensional internal geometries, manufactured by investment casting around ceramic rod cores that are chemically leached out after casting. Positional tolerances for cooling hole arrays — laser-drilled or EDM-drilled through the blade surface into the internal channels — are held to ±0.05–0.10 mm. Any deviation significant enough to block a cooling channel or thicken a film cooling hole land can produce a local hot spot sufficient to initiate oxidation-accelerated creep, which progresses to blade failure within operating hours.

The introduction of Thermal Barrier Coatings (TBCs) in the 1990s provided the final layer of thermal protection. A TBC, typically yttria-stabilised zirconia (YSZ) applied by electron-beam physical vapour deposition at thicknesses of 100–200 µm, reduces the temperature seen by the metallic substrate, relative to the ceramic surface, by approximately 100–150 K under operating conditions. The coating is not structural; it is a sacrificial thermal insulating layer that extends component life between scheduled maintenance intervals. Its degradation by sintering (at sustained high temperature), by ingestion of CMAS (calcium-magnesium-alumino-silicate) particulates from volcanic ash and dust, and by thermal cycling stresses during engine start and shutdown is one of the primary drivers of high-pressure turbine blade maintenance intervals across the civil aviation fleet.
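The size of that temperature drop can be sanity-checked with steady one-dimensional conduction through the coating, $\Delta T = q \, t / k$. The sketch below is an order-of-magnitude estimate only. The heat flux of 1 MW/m² and the coating conductivity of 1.5 W/(m·K) are assumed, illustrative values, not figures from the text, and the function name is hypothetical.

```python
def tbc_temperature_drop(heat_flux_w_m2: float, thickness_m: float,
                         conductivity_w_mk: float) -> float:
    """Temperature drop across a flat coating layer from Fourier's law: q * t / k."""
    return heat_flux_w_m2 * thickness_m / conductivity_w_mk


HEAT_FLUX_W_M2 = 1.0e6   # ASSUMPTION: illustrative heat flux into the blade wall
CONDUCTIVITY_W_MK = 1.5  # ASSUMPTION: illustrative conductivity for a YSZ coating

for thickness_um in (100, 150, 200):  # thickness range quoted in the text
    drop_k = tbc_temperature_drop(HEAT_FLUX_W_M2, thickness_um * 1e-6, CONDUCTIVITY_W_MK)
    print(f"{thickness_um} um coating -> about {drop_k:.0f} K drop")
```

With these assumed inputs the estimate lands in the same range as the 100–150 K quoted above. The useful point is the scaling: the drop grows linearly with coating thickness and heat flux, and inversely with conductivity.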

The 850 Kelvin Gain and Its Limits

The cumulative result of 80 years of alloy development, internal cooling innovation, and thermal barrier coating technology is a TIT increase from approximately 1,100 K in the first-generation engines of the 1940s to approximately 1,950 K in current-generation high-bypass turbofans. That increase of approximately 850 K is, in terms of its contribution to aircraft fuel efficiency improvement, the dominant single engineering variable in the history of commercial aviation. It is larger in efficiency impact than the bypass ratio increase from early turbojets, larger than the aerodynamic improvements in wing and nacelle design, and larger than the weight reduction from composite structures.

The efficiency gains that make long-haul air travel economically accessible — the 40–50% improvement in fuel consumption per seat-kilometre between 1970s-era wide-body aircraft and current-generation LEAP and GEnx-equipped aircraft — trace primarily to this thermal trajectory.

The question now is how much further the trajectory can run. The answer is: not much further, on the current materials basis. The industry TIT roadmaps for the 2030s target incrementally higher temperatures in the 2,000–2,050 K range, but achieving these requires transitioning from metallic superalloy turbine blades to ceramic matrix composites (CMCs) — specifically silicon carbide fibre-reinforced silicon carbide (SiC/SiC) composites. CMC components have a structural temperature ceiling roughly 200–300 K above the best nickel superalloys, enabling TITs in the 2,100–2,200 K range without the blade cooling air consumption that robs efficiency in current designs.

The Thermal Wall Is Also the Opportunity

GE Aviation introduced CMC high-pressure turbine shrouds in the LEAP engine in 2016 and has since extended CMCs to static hot-section parts (combustor liners, high-pressure turbine nozzles, and shrouds) in the GE9X engine, which powers the Boeing 777X. The manufacturing challenges of CMC components, including fibre weaving, infiltration of ceramic matrix material, surface coating compatibility, and inspection, are formidable, and production yields have historically been lower than for metallic castings.

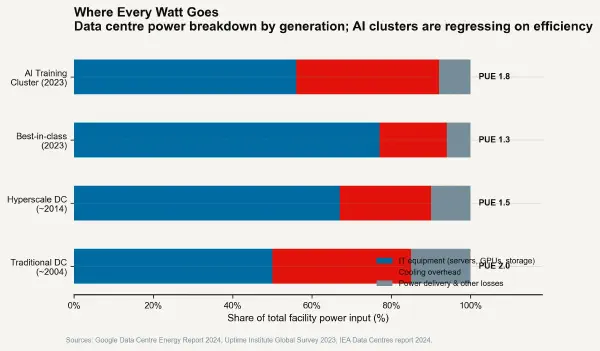

The data-centre post of this series noted that the TDR inflection for AI cooling was crossed at the transition from air to liquid cooling. The turbine equivalent — the transition from metallic to ceramic blade materials — represents a similar inflection point for aviation efficiency. On the near side of it, incremental alloy refinements and coating improvements yield diminishing TDR returns. On the far side, the 200–300 K temperature headroom of CMC materials enables the next step of efficiency gain.

The cost side of the TDR for modern turbine development, the infrastructure cost to add one kelvin of sustained TIT capability, has been rising steeply as the nickel superalloy system approaches its limits, and the ratio itself has been falling accordingly. The engineering capital now required to add a single kelvin of TIT to a production engine, in alloy qualification, casting process development, cooling redesign, and durability validation, is substantially higher than it was when TIT was at 1,400 K and the material system had headroom. This is the fundamental thermal constraint of aviation: the marginal cost of extracting more efficiency through temperature is rising, and the CMC transition is the only credible route around the wall.

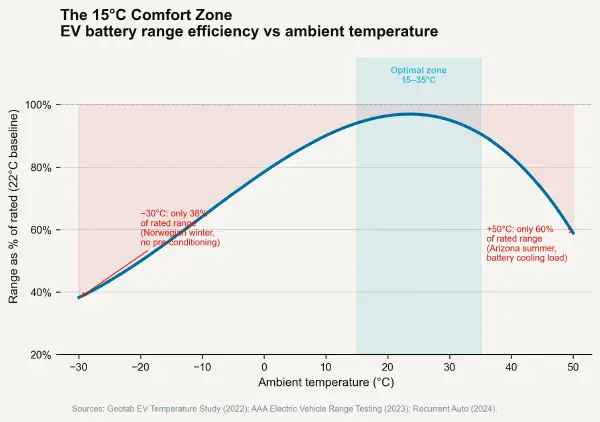

The cooling constraint is the same across every domain examined in this series — silicon physics in post one, facility infrastructure in post two, battery electrochemistry in post three, and turbine metallurgy here. In each case, the physical limits of heat management define the boundary of achievable performance more completely than any other engineering variable. The TDR is not a metric that describes a secondary operational concern. It describes the frontier of what is possible.