The Specification That Nobody Had Read in Full

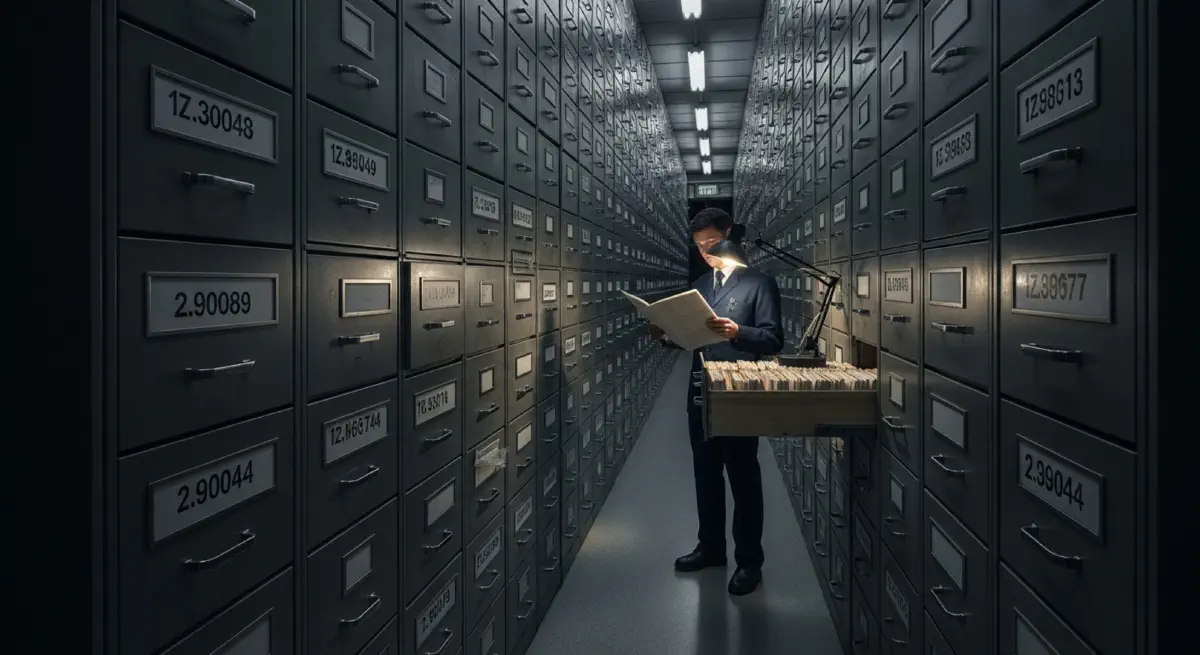

The Space Shuttle vehicle had approximately 2.5 million parts. Its formal specification documentation — the full set of requirements, interface control documents, maintenance procedures, and safety analyses governing every component of the orbiter, solid rocket boosters, and external tank — ran to approximately 33,000 distinct documents. No individual, and no team, had read them all. No computer system maintained a searchable cross-reference of all interdependency relationships between these documents. The program's configuration management process — theoretically, the system that ensured all changes to one document were propagated to all affected documents — was acknowledged in the Columbia Accident Investigation Board's 2003 report to have been operating against backlogs of thousands of unresolved discrepancy reports at the time of the accident.
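To make the propagation problem concrete, here is a minimal sketch of the kind of cross-reference query that configuration management is meant to answer: given one changed document, which others need review? The document identifiers and the `references` mapping are hypothetical illustrations, not anything drawn from the Shuttle programme's records.

```python
from collections import deque


def affected_documents(dependants, changed):
    """Breadth-first search for every document downstream of a changed one.

    dependants maps a document ID to the IDs of documents that cite it.
    """
    seen = {changed}
    queue = deque([changed])
    while queue:
        doc = queue.popleft()
        for dependant in dependants.get(doc, ()):
            if dependant not in seen:
                seen.add(dependant)
                queue.append(dependant)
    seen.discard(changed)  # report only the documents that need review
    return seen


# Hypothetical document identifiers, for illustration only.
references = {
    "REQ-001": ["ICD-010", "MAINT-200"],
    "ICD-010": ["SAFETY-300"],
    "MAINT-200": ["SAFETY-300"],
}
print(sorted(affected_documents(references, "REQ-001")))
# ['ICD-010', 'MAINT-200', 'SAFETY-300']
```

The query itself is trivial once the graph exists. The hard part, on the account above, is that nobody held the complete graph.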

The Columbia accident on February 1, 2003 killed all seven crew members and grounded the Shuttle program for two and a half years. The orbiter disintegrated on re-entry after foam debris from the external tank struck the leading edge of the left wing during launch. The CAIB found that the physical cause was a breach in the wing's thermal protection system: the foam strike damaged a reinforced carbon-carbon panel on the leading edge, not one of the silica tiles. It found that the systemic cause was an institutional failure: a complex of management decision processes, communication structures, and risk normalisation patterns that had developed over two decades of Shuttle operations in ways that made the accident, in the CAIB's assessment, "not surprising but rather predictable."

The CAIB report's most quoted passage describes what the Board called "organisational silence": the inability of information about potential risks to travel from the technical workforce to the management decision-makers through communication layers, management filters, and institutional incentive structures that effectively prevented the transmission of bad news. The 33,000-document specification set was not the cause of this silence. It was the visible expression of the system complexity that made such silence structurally inevitable.

The CRIP and Its Measurement

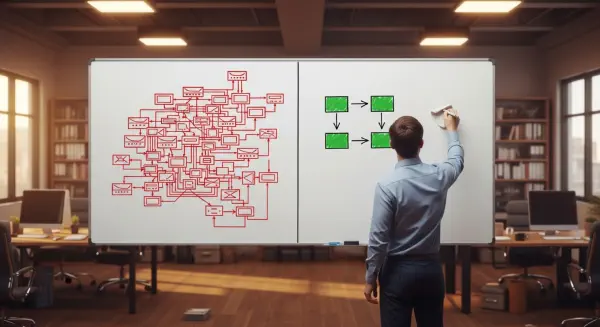

The Complexity-Reliability Inversion Point is the level of system complexity at which the marginal failure modes added by each additional unit of complexity exceed 1.0 — meaning each additional feature or interface generates more than one new failure mode. Below CRIP, complexity and reliability can grow together: each feature adds capability and the incremental failure risk is manageable. Above CRIP, the system has more potential interaction paths than the engineering and operational processes can track, analyse, and protect against.
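The definition can be read as a simple threshold test on a series of counts. The sketch below assumes, hypothetically, that a programme records the number of identified failure modes at each successive unit of complexity; the function names and the sample numbers are illustrative, not measurements from any real system.

```python
from __future__ import annotations

from typing import Optional, Sequence


def marginal_failure_modes(failure_modes: Sequence[int]) -> list[int]:
    """Failure modes added by each successive unit of complexity."""
    return [b - a for a, b in zip(failure_modes, failure_modes[1:])]


def find_crip(failure_modes: Sequence[int], threshold: float = 1.0) -> Optional[int]:
    """Return the first complexity level whose marginal failure modes exceed threshold.

    failure_modes[i] is the (hypothetical) number of identified failure modes
    when the system has i units of complexity. Returns None if the threshold
    is never exceeded.
    """
    for level, added in enumerate(marginal_failure_modes(failure_modes), start=1):
        if added > threshold:
            return level
    return None


# Illustrative numbers only, not data from any real programme.
counts = [0, 1, 2, 3, 5, 8, 13]
print(marginal_failure_modes(counts))  # [1, 1, 1, 2, 3, 5]
print(find_crip(counts))               # 4
```

In this toy series the first three units of complexity each add one failure mode, and the fourth adds two, so the inversion point sits at level 4.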

CRIP is not a fixed number. It is a function of the analysis and management processes surrounding the system. A highly capable failure mode and effects analysis (FMEA) process, a mature configuration management system, and a well-resourced safety review board can sustain a higher absolute complexity before CRIP is crossed, because they are processing the complexity adequately. The same system with a degraded safety review process, an underfunded FMEA, or a backlogged configuration management system will cross CRIP at lower absolute complexity. This means CRIP is as much an institutional variable as an engineering one: it is the intersection of system complexity with the complexity-management capacity of the organisation operating it.
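One way to picture CRIP as an institutional variable is a toy model in which the organisation can analyse a fixed number of interaction paths, and a system of n components has n(n-1)/2 pairwise paths. Both the pairwise-only assumption and the capacity figures are simplifications introduced here for illustration; real interaction spaces are larger and real review capacity is not a single number.

```python
def pairwise_interactions(n_components: int) -> int:
    """Number of distinct component pairs in a system of n components."""
    return n_components * (n_components - 1) // 2


def max_manageable_components(review_capacity: int) -> int:
    """Largest component count whose pairwise interactions fit the review capacity."""
    n = 0
    while pairwise_interactions(n + 1) <= review_capacity:
        n += 1
    return n


# Hypothetical capacities: interaction paths the organisation can analyse.
print(max_manageable_components(1000))  # 45
print(max_manageable_components(500))   # 32
```

Under these assumptions, halving review capacity lowers the sustainable component count from 45 to 32. The system has not changed at all; only the organisation's ability to process it has.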

The Shuttle program's CRIP had been crossed before Columbia. The Challenger accident in January 1986 was caused by the failure of an O-ring seal in a joint of the right solid rocket booster under cold-weather launch conditions. O-ring damage was a known risk: it had been observed on multiple previous flights, normalised through a process of "acceptable risk" redefinition, and not escalated to management in adequate form before the January 28 launch. The Presidential Commission report on Challenger, like the CAIB report on Columbia, attributed the accident primarily to organisational and management failures in the handling of known information.

Two unrelated accidents, 17 years apart, produced by two different physical mechanisms, on a program that had invested extensively in safety analysis and corrective action between them — and both attributable primarily to the inability of the organisation's decision processes to handle the complexity of information flowing from the technical system's known risks. The Shuttle program did not cross CRIP in 1986 and then fix it. It was operating above its institutional CRIP continuously.

The Boeing 737 MAX Architecture

The case of the Boeing 737 MAX and its Maneuvering Characteristics Augmentation System (MCAS) presents a CRIP crossing in a different form: not the management of a large, legacy-complex system, but the addition of a specific new feature to a mature platform without adequate analysis of the interaction complexity it introduced.

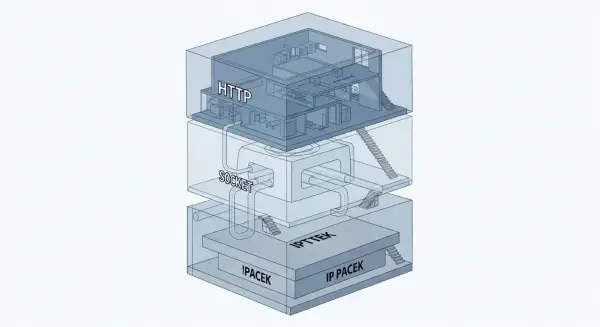

The 737 MAX required MCAS because its new LEAP-1B engines, larger and more fuel-efficient than the CFM56s on the previous 737 NG, had to be mounted further forward and higher on the wing to maintain ground clearance. This repositioning altered the aircraft's aerodynamic moment in certain high angle-of-attack flight conditions, causing a nose-up pitch tendency that the FAA's handling-characteristics regulations required Boeing to compensate for. The engineering solution was MCAS: a software function that automatically commanded nose-down pitch trim when the flight control computer detected conditions matching the potential pitch-up tendency.

In the version of MCAS submitted for certification in 2017, the system relied on input from a single angle-of-attack (AoA) sensor, with no cross-check against the aircraft's second sensor. If that sensor malfunctioned in a way that indicated a higher AoA than was actually present, MCAS would activate when the aircraft was not in the pitch-up danger condition. The system could reactivate repeatedly, at 5-second intervals, for as long as it detected the erroneous high-AoA condition. The crew could interrupt an activation with the electric trim switches on the control yoke, but this only paused it; MCAS would reactivate 5 seconds later if the sensor fault persisted. Pulling back on the control column did not stop it. The only full countermeasure was the stabiliser trim cutout procedure, using switches on the centre pedestal.
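The difference between a single-sensor design and a cross-checked one can be shown with a deliberately simplified toy model. It is not Boeing's implementation: the activation threshold, the disagreement tolerance, the constant sensor readings, and the function names are all hypothetical, and only the 5-second re-activation interval comes from the description above.

```python
AOA_THRESHOLD_DEG = 12.0   # hypothetical activation threshold
MAX_DISAGREE_DEG = 5.5     # hypothetical cross-check tolerance
REARM_INTERVAL_S = 5       # re-activation interval described in the text


def should_activate(primary_aoa: float, secondary_aoa: float, cross_check: bool) -> bool:
    """Decide whether the toy trim function fires in one evaluation cycle."""
    if cross_check and abs(primary_aoa - secondary_aoa) > MAX_DISAGREE_DEG:
        return False  # sensors disagree: inhibit rather than trust either one
    return primary_aoa > AOA_THRESHOLD_DEG


def count_activations(primary_aoa: float, secondary_aoa: float,
                      duration_s: int, cross_check: bool) -> int:
    """Count activations over duration_s, assuming constant sensor readings."""
    return sum(
        should_activate(primary_aoa, secondary_aoa, cross_check)
        for _ in range(0, duration_s, REARM_INTERVAL_S)
    )


# A faulty primary vane reads 22 degrees while the true AoA is about 4 degrees.
print(count_activations(22.0, 4.0, 60, cross_check=False))  # 12
print(count_activations(22.0, 4.0, 60, cross_check=True))   # 0
```

In this sketch a single stuck-high sensor produces twelve nose-down commands in one minute, while the cross-checked variant produces none. With two healthy sensors that agree on a genuinely high AoA, both variants behave identically.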

The 737 MAX certification process did not analyse MCAS's interactions with the full avionics failure mode space because MCAS was initially characterised as a low-severity system whose failure had been assessed as tolerable. This characterisation was retained through certification even as MCAS authority, the range of trim movement it could command, was increased roughly fourfold during flight testing (from 0.6 to 2.5 degrees of stabiliser movement per activation) without a revised safety analysis of the expanded authority. The increase changed the consequences of a malfunction in ways that the original failure analysis did not reflect.

Two accidents — Lion Air 610 in October 2018 (189 deaths) and Ethiopian Airlines 302 in March 2019 (157 deaths) — resulted from AoA sensor failures triggering MCAS activations that the crews could not successfully counter before aircraft control was lost.

The Meta-Pattern of CRIP Crossing

The Shuttle and 737 MAX cases share a meta-pattern that is present in essentially all documented CRIP-crossing disasters in complex engineering systems:

First, an original system was designed within a complexity envelope that its surrounding engineering and operational processes could manage. Second, complexity was added — through requirements growth (Shuttle's evolving mission envelope), feature additions (MCAS), or time-driven specification accumulation — without a corresponding upgrade to the complexity-management infrastructure surrounding the system. Third, the new complexity introduced interaction modes whose behaviour under failure conditions was not fully analysed, either because the analysis tools were inadequate, because the analysis was resource-constrained, or because the organisational incentives prioritised schedule and cost over analysis completeness. Fourth, a physical failure initiated a sequence whose propagation through the interaction modes of the complex system produced a consequence that the operational crew — cognitively bounded human beings operating under time pressure — could not interrupt.

The consistent institutional response to a CRIP-crossing accident is to add complexity to the socio-technical system surrounding the aircraft or process: new checklists, new procedures, new training requirements, new inspection protocols. Each of these additions is individually rational; each moves the system further past CRIP. The response to Challenger added configuration and risk management processes. The response to Columbia added foam debris inspection and on-orbit repair capability programmes. The response to the 737 MAX added MCAS sensor cross-checking, pilot training requirements, and expanded FMEA scope for future certifications.

The correct engineering response to a CRIP crossing is to reduce complexity — remove the feature or architectural choice that produced the interaction mode that killed people. This response is adopted very rarely, because it requires accepting that the capability associated with the feature is not achievable within the complexity management envelope of the existing system. It requires, in short, admitting that the system cannot do everything it was designed to do. The next post examines the engineering cultures that have consistently managed to stay below CRIP — not by avoiding complexity, but by architecting it.