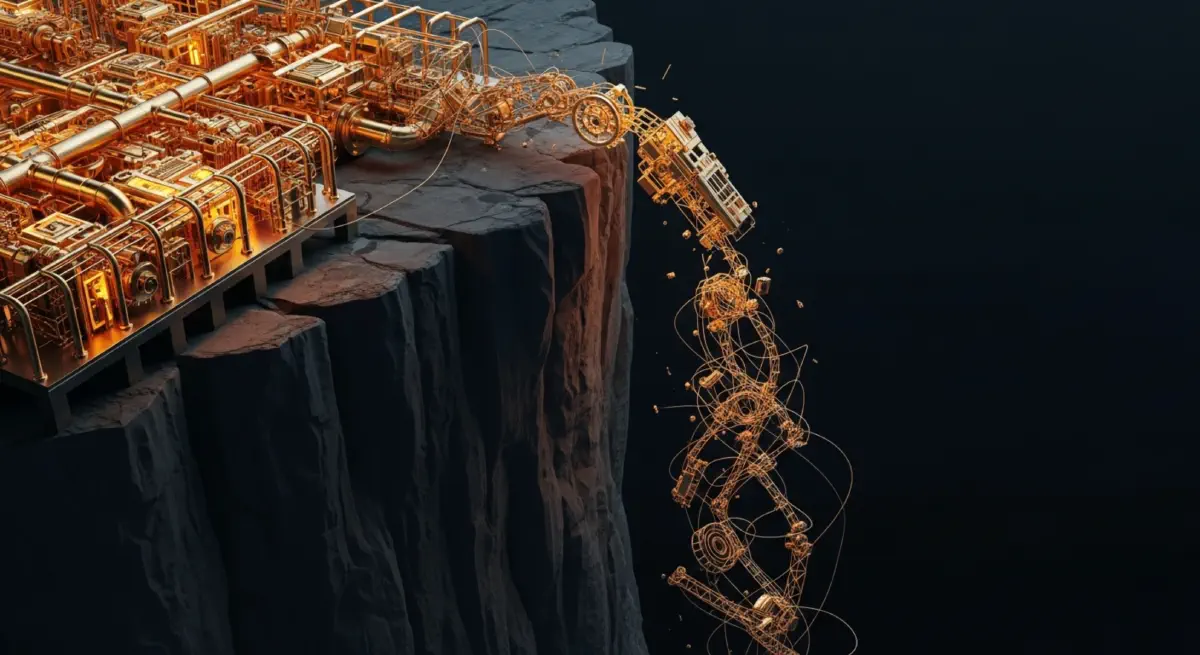

The Complexity Cliff

Key Insights

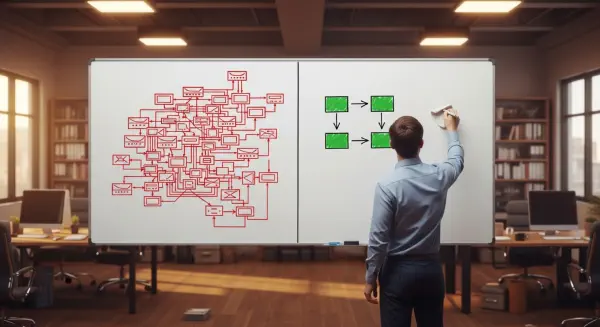

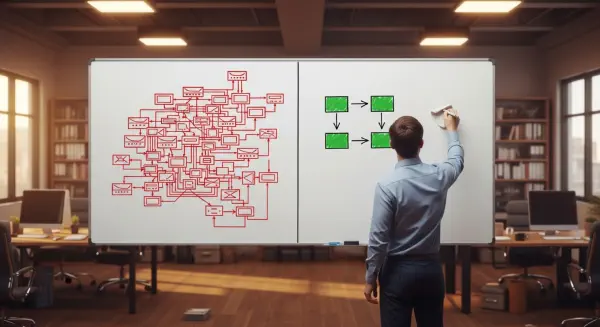

- The Complexity-Reliability Inversion Point (CRIP) is the system complexity level (measured in components, interfaces, modes, or decision branches) at which the marginal rate Δ(failure modes) / Δ(complexity units) exceeds 1.0. Below CRIP, adding one unit of complexity adds less than one net new failure mode. Above CRIP, each added unit brings more than one new failure mode, so an additional feature costs more in failure risk than its added capability is worth.

- The Boeing 737 MAX MCAS had a CRIP-crossing architecture: a single software function added to manage a known handling characteristic, whose interactions with the existing stabilizer trim, autopilot, and angle-of-attack sensing systems created failure mode combinations that could not be managed by crews trained on the pre-MCAS 737 configuration.

- The NASA Space Shuttle program, with approximately 33,000 specification documents and over 2,000,000 parts, was operating above its human-manageable CRIP. The Challenger and Columbia accident investigations both identified systemic failures in managing that complexity, rather than individual errors, as primary causes.

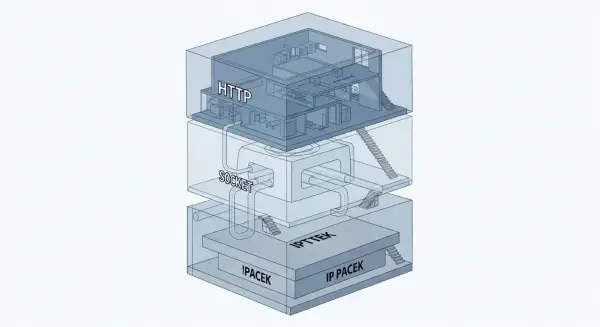

- TCP/IP's original design constraint — that routers would do as little as possible, with intelligence pushed to the endpoints — was an explicit CRIP management decision. The resulting internet has scaled to billions of nodes while maintaining core reliability because the protocol's complexity is bounded by this architectural principle.

- The primary organisational response to a CRIP-crossing failure is to add more complexity: new procedures, new checklists, new inspection steps, new compliance requirements. This response pushes the system further past CRIP. The correct response — removing the feature or interface interaction that crossed CRIP — is institutionally much harder.
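The CRIP definition above can be made concrete with a small calculation. The following is a minimal sketch, not a validated reliability method: it assumes you already have counts of failure modes at several complexity levels, and it reports the first level at which the marginal rate Δ(failure modes) / Δ(complexity units) exceeds 1.0. The function name `find_crip`, the `threshold` parameter, and the sample figures are hypothetical and chosen only for illustration.

```python
from typing import Optional, Sequence, Tuple


def find_crip(
    samples: Sequence[Tuple[float, float]],
    threshold: float = 1.0,
) -> Optional[float]:
    """Estimate the Complexity-Reliability Inversion Point from sampled data.

    samples: (complexity, failure_modes) pairs, ordered by strictly
             increasing complexity.
    Returns the complexity level at the start of the first interval whose
    marginal failure-mode rate exceeds `threshold`, or None if no interval
    crosses it.
    """
    for (c0, f0), (c1, f1) in zip(samples, samples[1:]):
        if c1 <= c0:
            raise ValueError("complexity values must be strictly increasing")
        # Marginal rate: new failure modes per added unit of complexity.
        marginal = (f1 - f0) / (c1 - c0)
        if marginal > threshold:
            return c0
    return None


# Hypothetical measurements: (components, identified failure modes).
# These numbers are illustrative only and do not describe any real system.
samples = [(10, 4), (20, 9), (30, 16), (40, 29), (50, 51)]

print(find_crip(samples))  # marginal rates are 0.5, 0.7, 1.3, 2.2
```

With these illustrative figures the marginal rate first exceeds 1.0 on the step from 30 to 40 components, so the sketch reports 30 as the estimated CRIP. In practice the hard part is the input data: counting failure modes consistently across complexity levels is an assumption this sketch does not address.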

References

Perrow, C. (1984). Normal accidents: Living with high-risk technologies. Basic Books.

Leveson, N. G. (1995). Safeware: System safety and computers. Addison-Wesley.

Dekker, S. (2011). Drift into failure: From hunting broken components to understanding complex systems. Ashgate.

Columbia Accident Investigation Board. (2003). Report of the Columbia Accident Investigation Board, Volume 1. NASA.

Presidential Commission on the Space Shuttle Challenger Accident. (1986). Report of the Presidential Commission on the Space Shuttle Challenger Accident. US Government.

House of Representatives Committee on Transportation and Infrastructure. (2020). Final committee report: The design, development and certification of the Boeing 737 MAX. US Congress.

Torvalds, L., & Diamond, D. (2001). Just for fun: The story of an accidental revolutionary. HarperCollins.

Tanenbaum, A. S., & Wetherall, D. (2011). Computer networks (5th ed.). Pearson.

Reason, J. (1997). Managing the risks of organisational accidents. Ashgate.

Hollnagel, E., Woods, D. D., & Leveson, N. (Eds.). (2006). Resilience engineering: Concepts and precepts. Ashgate.

Berwick, D. M., Nolan, T. W., & Whittington, J. (2008). The triple aim: Care, health, and cost. Health Affairs, 27(3), 759–769.

Landrigan, C. P., Parry, G. J., Bones, C. B., Hackbarth, A. D., Goldmann, D. A., & Sharek, P. J. (2010). Temporal trends in rates of patient harm resulting from medical care. New England Journal of Medicine, 363(22), 2124–2134.

MacCormack, A., Rusnak, J., & Baldwin, C. Y. (2006). Exploring the structure of complex software designs: An empirical study of open source and proprietary code. Management Science, 52(7), 1015–1030.

Turner, B. A. (1978). Man-made disasters. Wykeham Publications.

Sagan, S. D. (1993). The limits of safety: Organizations, accidents, and nuclear weapons. Princeton University Press.
