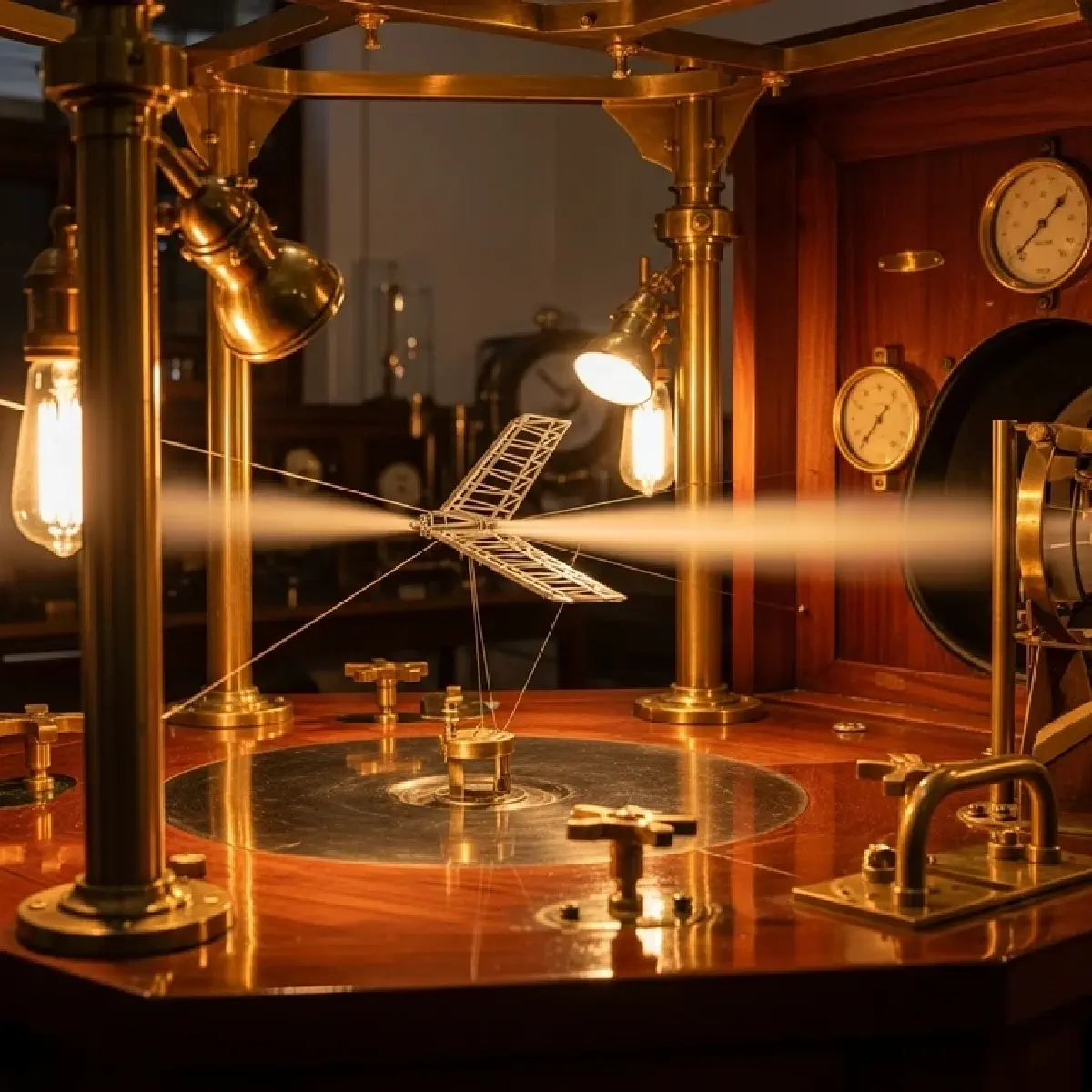

The Wright Brothers and the Power of the Wind Tunnel

In the fall of 1901, Wilbur and Orville Wright reached a breaking point. The aeronautical data provided by their predecessors, like Otto Lilienthal, was riddled with errors. Lilienthal had conducted over 2,000 glider tests over 20 years, eventually losing his life in a crash because he didn’t understand the fundamental relationships between lift and drift (the period term for drag). Rather than continuing to build and crash full-scale prototypes, the Wright brothers took a different approach: they built a wind tunnel. Over two months, they tested 200 models of different wing shapes, each just 3 to 9 inches (7.6 to 22.9 cm) long. They were the first to use modeling to predict performance before assuming the risk of flight.

This shift from physical trial-and-error to rigorous modeling and simulation remains the gold standard for modern systems engineering. Today, we face systems far more complex than a wood-and-canvas glider—systems where failure doesn’t just risk a single pilot’s life, but can trigger global financial collapses or environmental catastrophes. As we navigate this “System of Systems” era, the challenge is not just to build something that works, but to build something that remains resilient in the face of inevitable failure.

The Thesis of Proactive Resilience

The central argument of this post is that resilience is not an accidental byproduct of good design; it is a meticulously engineered property. True resilience requires a three-pronged approach: rigorous reliability modeling to predict failure points, stochastic simulation to account for real-world uncertainty, and multi-objective decision analysis (MODA) to balance the inescapable tradeoffs between performance, cost, and risk. By embracing the “bathtub curve” of system life cycles, engineers can mitigate risks before they occur, ensuring that the final system delivers value across its entire useful life.

The Mechanics of Longevity: Reliability and Risk

Explaining the System: The Bathtub Curve and the Math of Survival

Reliability is the probability that a system will operate properly for a specified amount of time under stated use conditions. To understand a system’s lifespan, engineers use a conceptual model known as the “bathtub curve”, named for the shape of the failure rate plotted against time. The curve divides a system’s life into three distinct phases: the Infant Mortality period, where manufacturing defects cause early failures and the failure rate falls; the Useful Life period, where failures occur randomly due to external stress at a low, roughly constant rate; and the Wear-Out period, where components degrade and the failure rate climbs rapidly.
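The three phases can be made concrete with a small sketch. It assumes, purely for illustration, that the overall failure rate is the sum of three Weibull hazard rates: one falling (infant mortality), one constant (useful life), and one rising (wear-out). The function names and every parameter value below are hypothetical and chosen only to produce a bathtub shape; they do not describe any real system.

```python
def weibull_hazard(t, shape, scale):
    """Weibull hazard rate h(t) = (shape / scale) * (t / scale) ** (shape - 1)."""
    return (shape / scale) * (t / scale) ** (shape - 1)


def bathtub_hazard(t):
    """Illustrative failure rate (per hour) at age t hours, t > 0."""
    # shape < 1: failure rate falls with age (infant mortality)
    infant = weibull_hazard(t, shape=0.5, scale=2000.0)
    # shape == 1: failure rate is constant (useful life)
    useful = weibull_hazard(t, shape=1.0, scale=5000.0)
    # shape > 1: failure rate rises with age (wear-out)
    wear_out = weibull_hazard(t, shape=5.0, scale=10000.0)
    return infant + useful + wear_out


for hours in (10, 100, 1000, 5000, 10000, 15000):
    print(f"t={hours:>6} h  failure rate={bathtub_hazard(hours):.6f} per hour")
```

Printing the rate at a few ages shows it starting high, dipping through the middle of life, and climbing again at the end, which is the profile the curve is named for.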

For a complex system, such as a $250,000 (230,000€) mobile rocket, engineers strive to keep the system in that middle “useful life” period for as long as possible. This is achieved through techniques like Environmental Stress Screening (ESS), which subjects items to extreme temperature or vibration to weed out weak units before they reach the consumer. By calculating the Mean Time to Failure (MTTF), engineers can develop maintenance strategies that replace parts just before they enter the wear-out zone. This is the difference between reactive repair and proactive system management.
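As a rough illustration of the MTTF arithmetic, the sketch below assumes a constant failure rate during useful life, so that reliability follows the exponential model R(t) = exp(-t / MTTF). The failure times are invented sample data, the sample is assumed complete (every unit ran to failure), and the function names are hypothetical.

```python
import math


def mttf_from_failures(failure_times_h):
    """Estimate MTTF as the mean of observed times to failure (complete sample)."""
    return sum(failure_times_h) / len(failure_times_h)


def reliability(t, mttf):
    """Probability of surviving to time t under a constant failure rate."""
    return math.exp(-t / mttf)


def time_to_reliability(target, mttf):
    """Longest operating time that keeps reliability at or above target."""
    return -mttf * math.log(target)


# Hypothetical times to failure, in hours, for five tested units.
failure_times_h = [820.0, 1130.0, 950.0, 1400.0, 700.0]
mttf = mttf_from_failures(failure_times_h)

print(f"Estimated MTTF: {mttf:.0f} h")
print(f"Reliability at t = MTTF: {reliability(mttf, mttf):.3f}")
print(f"Hours until reliability drops to 0.90: {time_to_reliability(0.90, mttf):.0f}")
```

One consequence of this model is easy to overlook: reliability at t = MTTF is exp(-1), so well under half of the units are expected to survive that long. The exponential model also describes only the useful-life phase; deciding when wear-out begins needs a separate model or field data.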

Complicating Factors: Systemic Risk and the Ghost in the Machine

While individual component reliability is well understood, “systemic risk” is the new frontier of failure analysis. Systemic risk is not a failure of a single unit, but the potential for the entire system to collapse due to interdependencies. The financial crisis of 2007–2008 is the textbook example: no single regulatory authority had oversight of the entire system, allowing a failure in the derivatives market to spill over into global banking. In systems engineering, these vulnerabilities often hide in the “seams”: the interfaces between different subsystems where sharing protocols and compatibility layers are most likely to fail.

Furthermore, engineers must account for “dependent scoring uncertainties”. For example, if you are designing a rocket, a technical failure in the fin material doesn’t just affect the fins; it simultaneously degrades both the rocket’s range and its accuracy. To manage this, we use Monte Carlo simulations, which run thousands of randomized “what-if” scenarios to produce a probability distribution of the system’s total value. This allows a decision maker to see that while a candidate design such as “Global Lightning” might be the highest-performing option, it also carries the highest uncertainty, represented by a wider spread in its potential outcomes.
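A minimal sketch of such a simulation follows. It assumes an additive value model with two measures (range and accuracy) scored on a 0 to 100 scale, and it models the dependency by drawing one shared fin-quality factor per trial that scales both scores. The weights, scores, noise levels, and the “Baseline” candidate are all hypothetical; only the “Global Lightning” name comes from the text above.

```python
import random
import statistics

W_RANGE, W_ACCURACY = 0.6, 0.4  # hypothetical weights, summing to 1


def clamp(x, lo=0.0, hi=100.0):
    """Keep a simulated score on the 0-100 value scale."""
    return max(lo, min(hi, x))


def simulate_total_value(range_score, accuracy_score, fin_low,
                         n_trials=10_000, seed=0):
    """Return one simulated total value per trial for a single candidate."""
    rng = random.Random(seed)  # seeded so the run is repeatable
    totals = []
    for _ in range(n_trials):
        # One shared draw per trial: this is what makes the two
        # scoring uncertainties dependent rather than independent.
        fin_quality = rng.uniform(fin_low, 1.0)
        # Small independent measurement noise on each score.
        range_value = clamp(range_score * fin_quality + rng.gauss(0.0, 2.0))
        accuracy_value = clamp(accuracy_score * fin_quality + rng.gauss(0.0, 2.0))
        totals.append(W_RANGE * range_value + W_ACCURACY * accuracy_value)
    return totals


# Hypothetical candidates: higher nominal scores but a less proven fin
# material (lower fin_low) versus a modest, well-understood design.
candidates = {
    "Global Lightning": dict(range_score=95, accuracy_score=90, fin_low=0.70),
    "Baseline": dict(range_score=80, accuracy_score=75, fin_low=0.95),
}
results = {name: simulate_total_value(**p) for name, p in candidates.items()}

for name, totals in results.items():
    print(f"{name:<17} mean={statistics.mean(totals):6.2f}  "
          f"stdev={statistics.stdev(totals):5.2f}")
```

With these invented inputs, the higher-scoring candidate also shows the wider spread, which is the tradeoff the decision maker needs to see. Treating range and accuracy as independent draws would understate that spread, because in reality a bad fin-material outcome hurts both at once.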

Tracing the Consequences: The Logic of the “One-Hoss Shay”

The ultimate consequence of poor systems thinking is a system that fails unevenly, leading to wasted resources. In the 1858 poem The Deacon’s Masterpiece, Oliver Wendell Holmes describes a carriage built so logically that no single part was weaker than another. It ran for exactly 100 years and then “went to pieces all at once”. While a humorous fiction, the “One-Hoss Shay” represents a fundamental engineering goal: Reliability Allocation.

If you have a series system, where the failure of any one part kills the whole machine, the most efficient way to improve reliability is to strengthen the weakest link. Conversely, in a parallel system, where you have redundant backups, you should improve the strongest component to maximize the probability that at least one unit survives, because the system fails only when every unit fails and the gain from improving one unit is scaled by how unreliable the others are. Using MODA, engineers can perform tradeoff analyses to determine if adding a $10,000 (9,200€) redundant sensor is more valuable than spending that same money to increase the rocket’s thrust. Plotting each candidate’s value against its cost identifies the Efficient Frontier, ensuring that the decision maker never selects a “dominated” solution, one that another candidate beats by offering more value for the same or less money.
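Both ideas in this paragraph can be checked numerically. The sketch below assumes independent component failures, so that series reliability is the product of the component reliabilities and parallel reliability is one minus the product of the component unreliabilities. It then filters a list of candidates down to the non-dominated ones. The component reliabilities, candidate names, costs, and value scores are hypothetical.

```python
from math import prod


def series_reliability(rs):
    """All components must work: R = product of R_i."""
    return prod(rs)


def parallel_reliability(rs):
    """At least one component must work: R = 1 - product of (1 - R_i)."""
    return 1.0 - prod(1.0 - r for r in rs)


def best_component_to_improve(rs, system_fn, delta=0.01):
    """Index of the component whose reliability, raised by delta, helps most."""
    baseline = system_fn(rs)
    gains = []
    for i in range(len(rs)):
        bumped = list(rs)
        bumped[i] = min(1.0, bumped[i] + delta)
        gains.append(system_fn(bumped) - baseline)
    return max(range(len(rs)), key=gains.__getitem__)


def efficient_frontier(candidates):
    """Names of candidates that no other candidate dominates on cost and value."""
    frontier = []
    for name, cost, value in candidates:
        dominated = any(
            c2 <= cost and v2 >= value and (c2 < cost or v2 > value)
            for _, c2, v2 in candidates
        )
        if not dominated:
            frontier.append(name)
    return frontier


# Hypothetical component reliabilities: index 0 is weakest, index 2 strongest.
component_reliabilities = [0.90, 0.95, 0.99]
print("Series, improve index:",
      best_component_to_improve(component_reliabilities, series_reliability))
print("Parallel, improve index:",
      best_component_to_improve(component_reliabilities, parallel_reliability))

# Hypothetical (name, cost in dollars, value score) tuples.
candidates = [
    ("Baseline", 250_000, 60),
    ("Add redundant sensor", 260_000, 72),
    ("Increase thrust", 260_000, 68),
    ("Both upgrades", 270_000, 78),
]
print("Efficient frontier:", efficient_frontier(candidates))
```

In this invented data set the thrust upgrade costs the same as the redundant sensor but scores lower, so it is dominated and drops off the frontier. Real value scores would come from the MODA value model rather than from fixed numbers.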

Synthesis: The Future of the Honest Broker

As we conclude this exploration of the “Architect’s Dilemma,” it becomes clear that the systems engineer is the technical “honest broker” for any organization. They are the ones who must tell the program manager that the “last 10% of performance generates one-third of the cost and two-thirds of the problems”. In a world defined by increasing complexity, security concerns, and privacy challenges, this role is more critical than ever.

The “One-Hoss Shay” reminds us that logic is the foundation of design, but the Wright brothers remind us that data-informed modeling is the foundation of survival. Systems engineers determine what should be, allowing engineering managers to determine what will be. Looking forward, the ability to build systems that are not just high-performing, but resilient and secure against adaptive adversaries, will be the defining challenge of our generation. We must move beyond “symptom-level” fixes and embrace the rigorous, iterative, and holistic discipline of systems engineering to ensure that when “the system votes last,” it votes in our favor.