In 2013, researchers at the University of Cambridge’s Psychometrics Centre claimed that by analyzing just 68 Facebook “likes,” they could predict a person’s ethnicity, sexual orientation, and political affiliation with high accuracy. A later study found that these computer-based personality assessments were more accurate than those made by a user’s real-world friends or even their spouse. This discovery marked the birth of a digital “Panopticon”: a system where a single watchman can surveil millions of people while remaining invisible to them.

Cambridge Analytica’s “Project Ripon” was the tactical attempt to scale this academic research into a weapon for political campaigns. The project was named after Ripon, Wisconsin, the birthplace of the Republican Party, and its goal was to create a “gold standard” for inferring personality from digital footprints. To feed this system, the firm needed data on an unprecedented scale, which led it to partner with Dr. Aleksandr Kogan and his company, Global Science Research (GSR).

The result was the harvesting of up to 87 million Facebook profiles through a simple personality quiz app called “This Is Your Digital Life.” While only 270,000 to 320,000 people actually took the quiz, Facebook’s “permissive” API allowed the app to “hoover up” the private data of the quiz takers’ friends without those friends’ knowledge or consent. This massive pool of data became the raw material for a system designed to “target the inner demons” of the American voter.
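The scale of that amplification follows from simple division. The sketch below uses only the figures quoted above and treats 87 million as an upper bound; the variable and function names are illustrative, not drawn from any source.

```python
# Figures quoted in the text; 87 million is an upper-bound estimate.
profiles_harvested = 87_000_000
quiz_takers_low, quiz_takers_high = 270_000, 320_000


def profiles_per_taker(total: int, takers: int) -> float:
    """Average number of harvested profiles attributable to each quiz taker."""
    return total / takers


# Fewer quiz takers means each one accounts for more harvested profiles.
high_estimate = profiles_per_taker(profiles_harvested, quiz_takers_low)
low_estimate = profiles_per_taker(profiles_harvested, quiz_takers_high)

print(f"{low_estimate:.0f} to {high_estimate:.0f} profiles per quiz taker")
```

On these numbers, each person who took the quiz exposed roughly 270 to 320 profiles on average, so well under 1% of the people in the dataset had ever interacted with the app.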

The Thesis of Algorithmic Siege

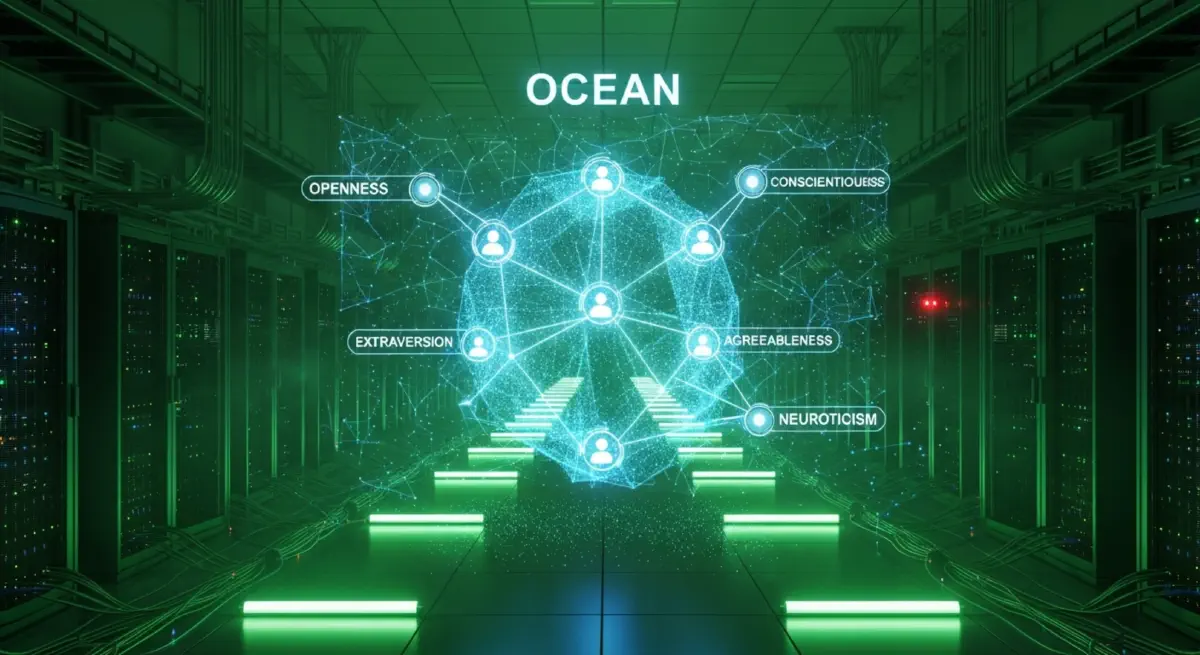

The Data Incursion Thesis argues that by combining massive digital datasets with the “Big Five” OCEAN personality framework, political actors can bypass rational debate and manipulate behavior at scale. This system transforms social media platforms from communication tools into “weapons-grade” influence machines, where algorithmic precision is used to trigger specific reactions in target audiences without their awareness. The approach is a comprehensive violation of informed consent and transparency, and it turns millions of users into passive data sources for commercial and political profit.

The Mechanism of the OCEAN Machine

At the heart of the psychographic model is the OCEAN framework, which categorizes personality into five traits: Openness, Conscientiousness, Extraversion, Agreeableness, and Neuroticism. Cambridge Analytica used these scores to segment the electorate into narrow categories. For example, they identified “timid traditionalists,” who were high in neuroticism and conscientiousness, and “relaxed leaders,” who were low in neuroticism and high in extraversion. By understanding these traits, campaigns could craft advertisements that resonated on a subconscious level, bypassing the voter’s rational defenses.
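The segmentation step described above can be sketched as a pair of threshold rules. This is a minimal illustration, not the firm’s actual method: the 0-to-1 score scale, the `HIGH` and `LOW` cutoffs, and the names `OceanScores` and `segment` are all assumptions made for the example. Only the two segment definitions come from the text.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OceanScores:
    """Big Five trait scores on an assumed 0.0 to 1.0 scale."""
    openness: float
    conscientiousness: float
    extraversion: float
    agreeableness: float
    neuroticism: float


HIGH = 0.6  # hypothetical cutoff for a "high" score
LOW = 0.4   # hypothetical cutoff for a "low" score


def segment(scores: OceanScores) -> str:
    """Assign one of the two segments named in the text, if either rule matches."""
    if scores.neuroticism >= HIGH and scores.conscientiousness >= HIGH:
        return "timid traditionalist"
    if scores.neuroticism <= LOW and scores.extraversion >= HIGH:
        return "relaxed leader"
    return "unsegmented"


anxious_planner = OceanScores(0.5, 0.8, 0.3, 0.5, 0.9)
print(segment(anxious_planner))
```

A real model would presumably use many more segments and softer boundaries; the point here is only that a handful of trait scores is enough to sort an electorate into named audiences.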

Micro-targeting is the delivery of personalized messages to specific individuals based on their inferred characteristics. Cambridge Analytica claimed to have over 5,000 data points on every adult in the United States, including census records, credit scores, and magazine subscriptions. This “Big Data” was combined with Facebook “likes” to create a holistic psychological profile for over 230 million Americans. The firm’s algorithms identified correlations between digital behaviors and personality types, allowing for the automation of thousands of tailored ads.
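What it means to “identify correlations” between a digital behavior and a personality trait can be made concrete with a single like and a single trait. The sketch below computes a Pearson correlation between a binary “liked this page” flag and a trait score. The data is invented and deliberately clean, and the `pearson` helper is written out here rather than taken from any system described in the text.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)


# Invented toy data: 1 means the user liked a given page, 0 means they did not.
liked_page = [1, 0, 1, 0, 1, 0]
# Invented extraversion scores for the same six users, on an assumed 0-1 scale.
extraversion = [0.8, 0.3, 0.7, 0.4, 0.9, 0.2]

r = pearson(liked_page, extraversion)
print(f"correlation between the like and the trait: {r:.2f}")
```

In practice a single like would be expected to carry a far weaker signal than this toy example suggests, which is why such models depend on aggregating many likes per person.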

A neurotic audience might receive a gun rights message focused on the fear of home invasion, while an agreeable audience would see the same issue framed as family tradition and heritage.

The “Ripon” software was designed as an all-in-one voter management platform. It was intended to track every interaction with a voter, providing volunteers with a complete “voter file” when they knocked on a door. This file would include the voter’s personality type and the specific messaging strategy most likely to persuade them.
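As a data structure, the “voter file” described here amounts to a record that pairs a personality label with a lookup of message framings and a log of contacts. The sketch below is hypothetical throughout: `VoterRecord`, its fields, and `FRAMING_BY_TRAIT` are invented names, and only the two gun-rights framings are taken from the text.

```python
from dataclasses import dataclass, field

# Framings from the gun-rights example in the text; the keys are hypothetical labels.
FRAMING_BY_TRAIT = {
    "neuroticism": "fear of home invasion",
    "agreeableness": "family tradition and heritage",
}


@dataclass
class VoterRecord:
    """Hypothetical canvassing record: who the voter is and how to approach them."""
    voter_id: str
    dominant_trait: str
    contacts: list = field(default_factory=list)  # log of past interactions

    def suggested_framing(self) -> str:
        """Look up the framing matched to this voter's dominant trait."""
        return FRAMING_BY_TRAIT.get(self.dominant_trait, "no tailored framing")

    def record_contact(self, note: str) -> None:
        """Append one interaction (door knock, call, ad view) to the log."""
        self.contacts.append(note)


voter = VoterRecord(voter_id="example-001", dominant_trait="agreeableness")
voter.record_contact("door knock, not home")
print(voter.suggested_framing())
```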

The Crucible of Matrix Sparsity

One major complication for the psychographic thesis is the technical reality of “matrix sparsity.” While the firm claimed to have 5,000 data points per individual, internal reports showed that most of those fields were actually blank. Many data features had a coverage rate of only 2% to 5%, meaning that 95% to 98% of voters had no recorded value for that specific feature. This suggests that the “5,000 data points” claim was largely a marketing exaggeration used to attract wealthy donors like Robert Mercer.
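The gap between the headline figure and the usable data is easy to quantify. The sketch below shows how a coverage rate is computed for one feature and what 2% to 5% coverage would imply per voter if every one of the 5,000 features were that sparse. The text says only that “many” features were, so the second calculation illustrates the sparse case rather than estimating the whole dataset. The function names and the toy column are invented.

```python
def coverage(column) -> float:
    """Fraction of records with a recorded value; None marks a blank field."""
    return sum(value is not None for value in column) / len(column)


def expected_populated(n_features: int, coverage_percent: int) -> float:
    """Expected non-blank fields per voter if every feature had this coverage."""
    return n_features * coverage_percent / 100


# Toy feature column: 40 voters, only 2 with a recorded value (5% coverage).
magazine_subscription = [None] * 38 + ["subscriber", "subscriber"]
print(f"coverage: {coverage(magazine_subscription):.0%}")

# Assumption for illustration: all 5,000 features share the quoted coverage range.
for pct in (2, 5):
    print(f"{pct}% coverage -> about {expected_populated(5000, pct):.0f} usable data points")
```

Under that assumption, a nominal 5,000 data points shrinks to somewhere between 100 and 250 populated fields per person.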

Furthermore, experts have questioned whether psychographic targeting “worked” as promised. Professor Eitan Hersh testified before Congress that the correlation between social media “likes” and personality traits was weak, and that political orientation is a much stronger predictor of voting behavior than personality. Internal accounts and campaign staff described the software as “comically bad” and “snake oil.” The Trump campaign reportedly relied more on traditional Republican National Committee (RNC) data than on Cambridge Analytica’s proprietary tools.

However, Christopher Wylie countered that these skeptical claims contradict “copious amounts of peer-reviewed literature” in top scientific journals. He pointed out that Facebook itself had applied for patents on determining user personality characteristics from social networking communications. Whether or not the technology was fully functional in 2016, the intent and the scale of the data misappropriation were unprecedented, representing a massive failure of data ethics and institutional oversight.

Institutional Collapse of Consent

The consequence of these operations was the normalization of a “surveillance business model” in politics. The UK Information Commissioner concluded that the company’s data protection practices were lax, with little thought for effective security measures. The investigation uncovered a “disturbing disregard for voters’ personal privacy” across the entire political campaigning ecosystem, from data brokers like Emma’s Diary to social media platforms like Facebook. The £140,000 fine issued to Emma’s Diary for selling mother-and-baby data to the Labour Party illustrates the pervasive nature of this data extraction.

The scandal revealed that Facebook was aware of the improper data harvesting as early as 2015 but failed to notify affected users or take meaningful remedial action for three years. Instead, the company relied on “certification” from the violators that the data had been destroyed. This “digital gangster” behavior left millions of UK and US users exposed to misuse of their data. The result is a system of “voter surveillance by default,” which undermines a basic condition of democratic choice: citizens can make informed choices only if they are sure they have not been “unduly influenced” by opaque algorithms.

Beyond the West, these big-data weapons were exported as a new form of “digital colonialism.” In Kenya and Nigeria, Cambridge Analytica reportedly used psychographic micro-targeting to influence voter perceptions through attack advertisements and sponsored posts. Human behavior and data are now appropriated as “raw material” to benefit corporations in the Global North, mirroring historical patterns of land and labor extraction. The lack of ownership rights over locally produced datasets in the Global South reduces national autonomy and erodes sovereignty.

Synthesis: The Industrialization of Micro-Targeting

The true legacy of the psychometric model is the “industrialization of micro-targeting.” While social media companies have restricted some API access since the scandal, the amount of information users leave online has exploded. Modern AI tools, including large language models, can now generate millions of personalized messages automatically, supercharging the process of psychological manipulation. We are living in an era where the “Personalization-Privacy Paradox” defines our digital existence: we enjoy personalized services while our privacy is weaponized against us.

The Cambridge Analytica affair proved that ostensibly “innocuous” platform features could be repurposed for mass manipulation. As digital footprints become more detailed, the risk of “information warfare” being brought to civilian populations remains a primary threat to democracy. We must recognize that technology is not just an engineering issue; it is a national security and human rights issue. The ultimate defense is moving ethics from “paper to practice” through enforceable regulatory frameworks grounded in a “duty of care” for individuals.

Without such reform, the digital Panopticon will continue to expand, turning the electorate into a “passive data source” for those who hold the keys to the data arsenal. The challenge is to dismantle the extractive logic of the modern data economy and reclaim the digital commons for the service of citizens. As we walk into a future driven by algorithms, we must ensure that technology serves humanity, rather than the other way around.